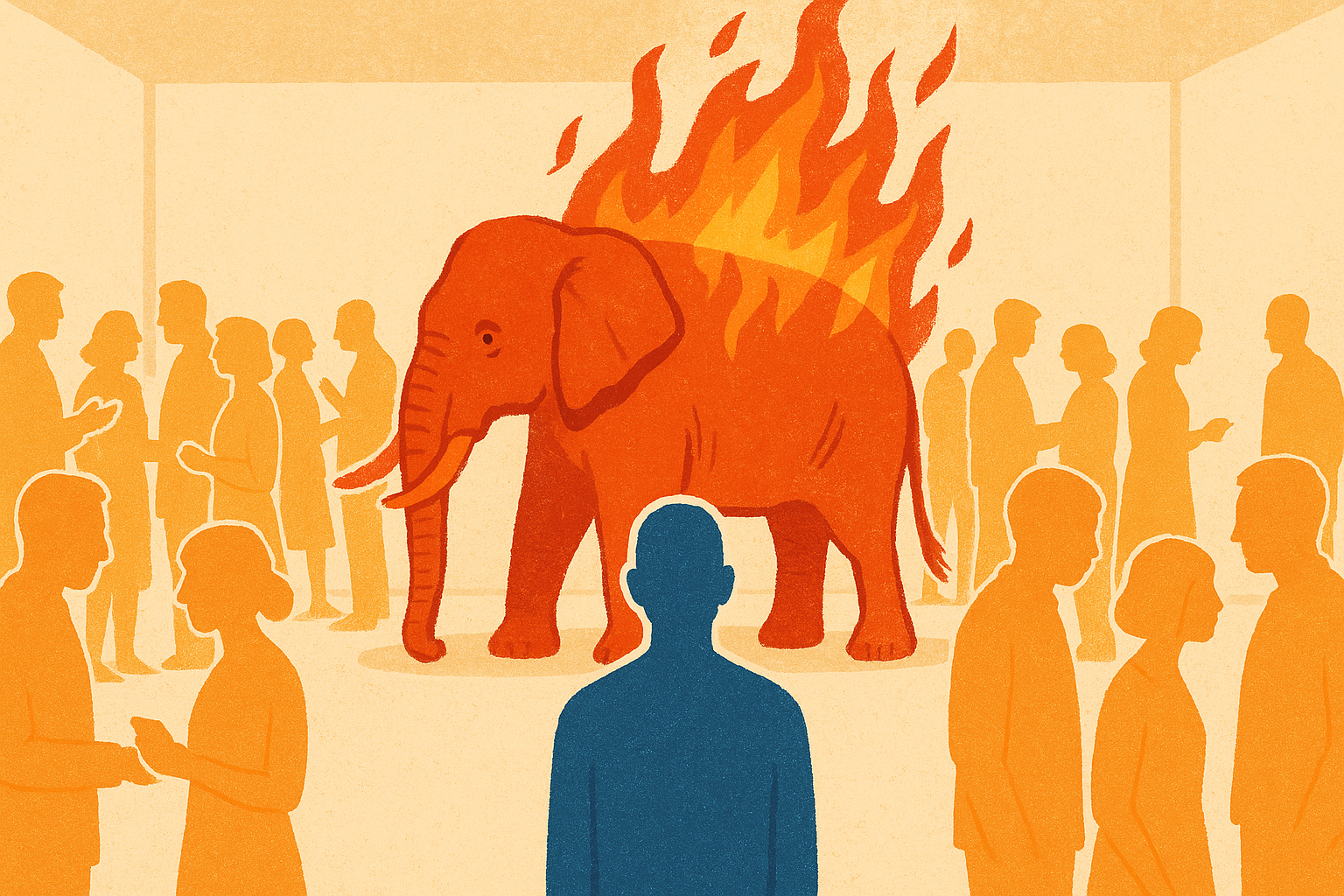

The Flaming Red Elephant in the Room

The obvious political reality that AI discourse keeps refusing to name. Red for the communist-revolutionary spirit rising in a generation locked out of the economy. Flaming for the firebombs already being thrown at AI executives and data centers. Elephant for the simple fact that the cause is obvious and nobody at the top of the industry wants to look at it.

There is an obvious thing sitting in the middle of the AI conversation in 2026 that almost nobody with a podium wants to name.

A generation of young people has been told publicly, by the people building AI, that they are about to become economically "useless." That generation already cannot afford rent. They have watched every institution they were supposed to trust fail to steward society in their interest. Now the same tech elite is pouring hundreds of billions into infrastructure sited in communities that did not want it, while selling investors on AI as the thing that will "take your job, be smarter than you, take your girlfriend, and ruin your life."

The result is exactly what a careful observer would predict. Firebombs. Shot-up councilmen's houses. Comment sections cheering it on. A growing share of young people convinced that stopping AI by any means necessary is a heroic calling.

AAS calls this the Flaming Red Elephant in the Room. Three parts, each one obvious once stated, each one actively ignored by AI industry leadership and AI doom leadership both.

Red

Red is the color of the communist-revolutionary spirit rising in a generation that feels abandoned by every existing institution, and the rise is understandable.

When the people at the top of the wealth distribution publicly describe you as "useless," and the companies building the biggest new technology in the world market it as the thing that will ruin your life, the red rising in response follows a pattern seen in every similar historical moment. Dismissing it as fringe, conspiracy, or adolescent is what the people who caused it would like everyone else to do. It is also what guarantees that the spiral accelerates.

The political energy of 2026 is materially more desperate and ideologically more radical than the energy of any decade in recent memory, and the radicalism is showing up on both ends of the horseshoe. Underestimating that is how institutions fail in the years ahead. See The Elevator Economy for the foundational economic observation underneath this, and Why Young People Are Right To Be Angry for the material receipts.

Flaming

Flaming is the literal fire. It is not rhetoric. It is the Molotov cocktails thrown twice in two weeks at Sam Altman's house. It is the thirteen rounds fired into an Indianapolis city councilman's home over a data center vote with an eight-year-old asleep inside. It is the cheering in the comments under every news article covering those events. It is what comes next.

Dylan Patel, founder of SemiAnalysis, named the trajectory directly on Patrick O'Shaughnessy's podcast:

"I think there will be a large-scale protest against Anthropic. End up at AI. People hate AI. AI is less popular than ICE, less popular than politicians. With Anthropic adding so much revenue, that's going to start causing business changes downstream. People are going to get more and more scared of AI. They'll start blaming more and more of their own problems and things that have been deep-seated problems for a long time. Those will bubble up and be blamed on AI. Probably some politician or influencer will be able to start taking and weaponizing AI against people. You look at the comments of news articles where Sam Altman had a Molotov cocktail thrown in his house twice in like two weeks. They're like, people are cheering it on. And this is just the beginning. So I think we'll see large-scale protests against AI in three months."

Patel's advice to the AI industry in the same interview is worth quoting alongside it:

"First of all, Sam Altman and Dario have to stop getting on interviews. They're so uncharismatic. Every interview they do is like, wow, normal people are going to hate you even more. Sam being on Tucker Carlson probably made all Republicans hate OpenAI. And same with Dario. They just have no charisma. That's first. Two, they need to start showing uplifting things that can be done with AI. Three, they need to stop talking about how the capabilities are going to change the whole world constantly, because then people are going to get fear of that capability, because they have no connection. The average person doesn't know an Anthropic employee. The average person doesn't know an OpenAI employee. They just view them as this sneaky cabal of 5,000 people at this company that are going to change the world and automate all the jobs and destroy society, and as people who are funding the building of all these data centers and power plants that are going to pollute the world. I think it's a huge reorg and rebranding that needs to be done."

The fire is no longer hypothetical. The question every AI institution has to answer in 2026 is what it is actually doing about the conditions underneath the fire, and whether its public posture is pouring water on the flames or kerosene.

Elephant

Elephant is the fact that the cause is obvious and the industry will not say it out loud.

The polite version of AI discourse treats the field as a meritocratic collaboration of curious researchers. The actual top of the industry is steered in meaningful part by a small class of transhumanist billionaires whose public statements have equivocated on whether ordinary human flourishing is even the point of AI. Several are personally entangled in the Epstein scandal. Some openly hold a "religion of intelligence" worldview in which AGI is a successor species rather than a tool built for human service. None of this is hidden or obscure. It is on the record in their own books, interviews, and open letters.

The AI doom movement on the other side of the conversation is funded by money that overlaps with these same networks. The two loudest sides of the AI debate trace back to a remarkably small set of people whose incentives diverge sharply from the public's. One side tells ordinary people they will be economically useless. The other side tells them the whole project is extinction-level evil. Both sides are currently speaking past the actual twenty-year-old trying to afford rent.

That is the elephant. We cannot have an honest conversation about applied AI's promise for humanity while pretending the top of the industry is stewarded by people who unambiguously want that outcome. The AAS position is that naming the elephant is the first honest move available. Closing the exclusion is how the elephant stops being on fire.

Why naming this matters

Every AAS member who runs a workshop, hosts a chapter meeting, publishes a case study, or has a conversation about AI in public is operating in a political environment shaped by the Flaming Red Elephant. Pretending the landscape is neutral is a posture that only works for people already inside the gates.

Some industry voices are starting to name it. Will Manidis, in an April 2026 X thread, called out Meta specifically:

"its impossible to overstate how well facebook is doing on comms surrounding data centers and models right now. offer jobs and training, clearly state the truth over water and power usage, don't do doomer fiction. just a relentless execution of the basics. no one else is even close."

And in a follow-up:

"not getting your data centers blown up is the only factor input to scaling laws that still makes this game a toss up."

That second line is the elephant named from inside the industry. The physical security of the AI buildout is now a scaling-laws-level input, sitting next to compute, data, and algorithms on the list of things that determine whether the whole project works. The inputs to that security are not technical. They are political and relational: jobs in the communities hosting the infrastructure, honest public communication about water and power, the absence of doomer framing, and a visible path for ordinary people into the economy the buildout is enabling.

Every one of those inputs is what AAS has been doing at the community level from day one. The Jarvis workshops, the chapter network, the public documentation, and the careers documented in the applied AI economy are literally the "jobs and training" side of Manidis's prescription, delivered at the ground level rather than by corporate comms.

The AAS counter-move is not to pick a side between the doomers and the techno-utopians. It is to close the actual exclusion that both sides feed on. Every person Jarvised with a working Personal Agentic OS is one less recruit for the stop-AI movement and one less data point for the "useless class" thesis. The fleet of arks, built one at a time, is the intervention that drains both reservoirs at once.

See Either We Jarvis The World, Or AI Is Doomed for the full strategic frame this concept lives inside.

Further Reading

- Either We Jarvis The World, Or AI Is Doomed: The strategic frame this concept lives inside.

- The Hyperagency Gap: The defining inequality the elephant is fueled by.

- The Elevator Economy: The material reality underneath the political rage.

- The New Flood: The omni-crisis landscape this fire is burning inside.

- Inclusive Technological Advancement: The design principle that actually closes the exclusion.

- Regenerative AI Advancement: Data centers as restoration infrastructure instead of extraction infrastructure.

- RIP To The Career Ladder: The labor-market reality underneath the anger.

- Garry Tan: From AI Doomerism to Molotov Cocktail: Reporting on the firebombing pipeline.