Either We Jarvis The World, Or AI Is Doomed

The more people feel left out of the AI revolution, the more they will glorify stopping it. Lock a generation out of AI literacy and economic hope, and they will join movements that promise to stop AI by any means necessary. The violence of 2026 is the predictable consequence. The intervention that actually works is getting as many people as possible Jarvised, as fast as possible, and letting them build from there.

What We Actually Believe About AI

The Applied AI Society is bullish on AI. This entire organization exists because we believe that creative human service, augmented by applied AI, can solve the civilizational problems this generation was handed and did not cause. Climate. Healthcare collapse. Housing. Education. Mental health. Community decay. The tooling to rebuild civilization at a scale that matches its actual needs exists for the first time in human history, and we are living through the first ten years of it.

That is the promise. It is real.

The promise is also conditional. Every good thing we believe about AI depends on four things being true at the same time:

- The narrative around AI, and the lived reality for ordinary people inside that narrative, must change. People who are watching the tools make billionaires richer while their rent keeps rising are living evidence that the promise is currently a lie. The promise has to become real in their actual lives first.

- The industry must prioritize ecological regeneration at the same weight as capability progress. Data centers without a restoration agenda are political landmines. Underneath its anger, the "No Data Centers" note left at a councilman's shot-up house was grief about the land.

- Applied AI literacy has to reach everyone, fast. The hyperagency gap has to close faster than the backlash grows.

- The industry has to stop talking about humans as future charity cases and start treating them as co-authors of what comes next.

If these conditions hold, AI delivers. If any of them fails, AI is doomed. Not in the doomer sense that the machines kill us. In the political sense that the backlash from the excluded forecloses the good futures before they can be built.

Here is the important clarification most AI discourse gets wrong. We are not building a single civilizational ark. Nobody should trust a single institution with that mandate, including ours. We are building the infrastructure and the culture for a fleet of arks, each one owned by the people who built it. The simplest ark is an individual who has been Jarvised: their own Personal Agentic OS, their context, their compounding system, their life and work. Every Jarvised person is an ark of one. The community of them is the fleet. Being Jarvised is also the floor. See Minimum Commercial Viability for why clearing it is survival-level infrastructure for a commercial actor in 2026. Above the floor, that actor is competitive and compounding. Below it, they are racing to catch up against people who have already crossed.

Every person who builds an ark of any size is a person the doom pipeline will struggle to recruit, because the material and the psychological exclusion are both closed at once. The rest of this piece is about the pipeline that is currently winning because the fleet is not yet large enough.

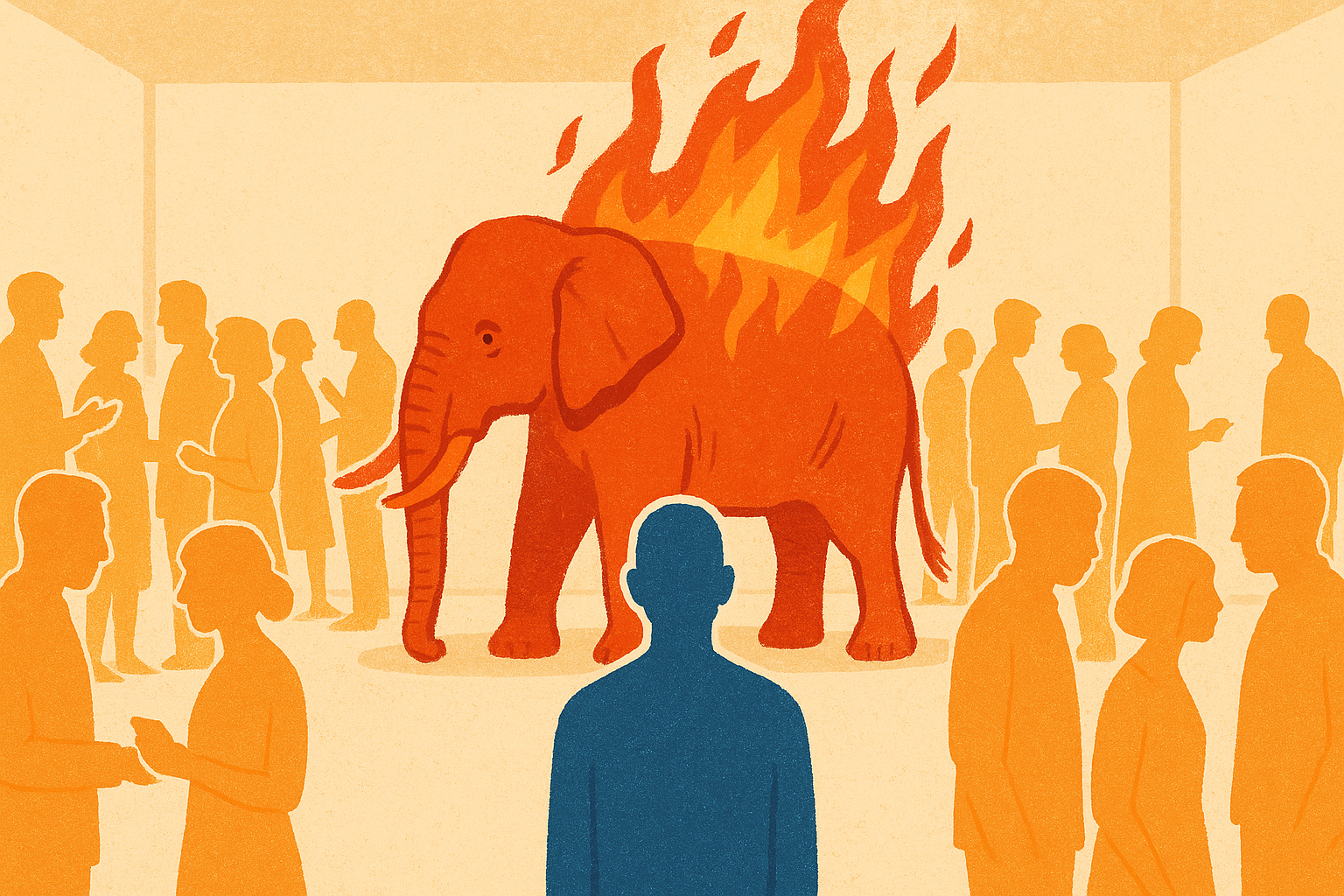

The Flaming Red Elephant

Before the pipeline, there is a flaming red elephant in the middle of the AI conversation that almost nobody wants to name.

Polite AI discourse treats the industry as a meritocratic collaboration of curious researchers. The reality is that the top of the industry is steered in meaningful part by a small class of transhumanist billionaires whose public statements have equivocated on whether ordinary human flourishing is even the point of AI. Many of them are personally entangled in the Epstein scandal, which ordinarily disqualifies anyone from stewarding anything. Some openly hold a "religion of intelligence" worldview in which AGI is treated as a successor species rather than a tool built for human service. None of this is hidden or obscure. It is on the record, in their own books, interviews, and open letters.

The AI doom movement on the other side of the conversation is funded by money that overlaps with these same networks. The two loudest sides of the AI debate trace back to a remarkably small set of people whose incentives diverge sharply from the public's. One side tells ordinary people they will be economically useless. The other side tells them the whole project is extinction-level evil. Both sides are currently speaking past the actual 20-year-old trying to afford rent.

That is the elephant. We cannot have an honest conversation about applied AI's promise for humanity while pretending the top of the industry is stewarded by people who unambiguously want that outcome. The AAS position is that naming this is the first honest move available. Closing the exclusion is how it gets fixed.

The elephant is flaming red for three reasons.

First, it is on fire, and nobody wants to look at it.

Second, red is the color of the communist-revolutionary spirit rising in a generation that feels abandoned by every existing institution, and the rise is understandable. When the people at the top of the wealth distribution tell you in public that you will be economically "useless," and the people who own the biggest new technology market it as the thing that will "ruin your life," the red rising in response is a predictable historical pattern in every similar moment. Dismissing it as conspiracy or fringe is what the people who caused it would like to do. It is also what guarantees the spiral accelerates.

Third, red is the literal color of the firebombs already being thrown at the physical symbols of that system. Altman's house. Data centers in small towns. The next target. When a population decides that the institutions in front of them are actively sabotaging their agency and their future, some fraction of that population will set those institutions on fire. That trajectory is what 2026 is showing. It does not stop on its own. It stops when the underlying exclusion stops.

The faithful response is to address what caused the flames. Dismissing the people carrying them is how the country ends up with many more flames, and eventually with a grid that actually goes out.

The Pipeline

On April 10, 2026, a 20-year-old threw a Molotov cocktail at Sam Altman's house and walked to OpenAI headquarters carrying a list of AI executives. His name is Daniel Moreno-Gama. He was a PauseAI member who held six community roles there. Months earlier he had written in public that AI-caused human extinction was "nearly certain" and that AI builders were attempting mass murder.

Four days before that, in Indianapolis, an assailant fired 13 rounds into a city councilman's home over a data center vote. An eight-year-old was asleep inside.

These events are the downstream output of a simple pipeline:

- A generation of young people feels materially locked out of a functional life in their country.

- A parallel class of billionaires has spent the same decade telling that generation, in public, that most of them will soon be economically "useless."

- A well-funded doom movement has offered that same generation a moral framework that says AI is the cause of their despair and stopping AI is a heroic calling.

- Some of them take it seriously enough to act.

The pipeline is a recruitment funnel operating in broad daylight. The longer people feel excluded from the AI revolution, the larger and more violent the stop-AI side of that funnel becomes. This is a structural observation, not a moral one.

Why Young People Are Right To Be Angry

Before anything else, the material reality has to be named.

Gen Z inherited an unaffordable country. The average American cannot reliably cover a small emergency expense. Rent, education debt, healthcare, and basic cost of living have decoupled from wages for a generation. The promises embedded in the American dream (follow the path, trust the institutions, get the degree, work hard, inherit the stability) have not paid off for most young people. They have watched their parents work hard and still not arrive. They have watched older generations pull the ladder up behind them.

The inequality underneath this is unbelievable by any historical standard. AAS has a name for the dynamic: the elevator economy. Some people and companies are going up. Everyone else is going down. There is no standing still. Conservatives sometimes call this the K-shaped economy, but K implies two stable trajectories. The reality is closer to an elevator with a cut cable: you are either ascending to infinity or in free fall. This is the foundational economic observation of the moment, and everything else in this essay builds on it. See Hyperagency and The Hyperagency Gap for why the ascent side of the elevator compounds so hard.

Then applied AI arrived on top of that. Young people are reading in the same news cycle that Anthropic's CEO expects the entry-level job market to collapse, that tech executives use the phrase "useless class" in serious conversations about the future workforce, and that the companies building these tools are worth trillions while the public services in their neighborhoods decay.

The AI industry also softened the target with its own marketing. The commentator known as Threadguy, writing on X after Sam Altman's house was attacked twice in one week, put it directly:

"Their entire marketing pitch over the last three years has basically been 'the product I'm building is going to take your job, be smarter than you, take your girlfriend, and ruin your life.' That's been the approach to raise as much VC money as possible."

"I don't think we've accounted for how many people are going to revolt against the narrative being pushed by AI. I don't think they understand how much people care about their jobs, how desperate most of the world is right now, and the steps they're willing to go to to make sure they keep their shit."

That is the industry's own marketing, played back with the consequences attached. Three years of press releases that treated human displacement as a selling point pre-wired the public to believe that AI leaders were the adversary. The doom movement did not have to manufacture that framing. The industry did it first for VC money, and the doom movement walked in afterward and gave the rage a moral story.

The anger is legitimate. The question is what gets done with it. Right now, the loudest answers being offered to this generation are engineered to make the anger worse while redirecting it toward targets that do nothing to improve anyone's material conditions.

The deeper cause underneath the anger is decades of failed stewardship by the class of people who were supposed to be stewarding society in the interest of everyone. Blame the social media barons, blame the AI doomers, blame the specific villains people are naming right now. Those blames are fine as far as they go. But the underlying reason a generation is fed up is that the so-called elite of the last forty years did not steward the development of society in anyone's interest but their own, and everything downstream of that failure, including the arrival of applied AI into a pre-existing fire, is playing out exactly the way it would be expected to.

We can change that. Bringing AI to every person as a tool for service of humanity is the intervention that actually changes it. Everything else is venting.

How The Doom Movement Converts Anger Into Violence

The first loud answer is that AI is an extinction event and the righteous response is to sabotage it.

This position has graduated from a small Discord subculture into a billion-dollar advocacy ecosystem. The Future of Life Institute alone received a cryptocurrency donation reportedly worth over $660 million. Over a billion dollars has flowed through the AI existential risk ecosystem to fund open letters, Discord servers, academic chairs, media placements, and community roles for members like Moreno-Gama.

Credentialed intellectuals have said on the record that the expected outcome of AI development is that "literally everyone on Earth will die," called for airstrikes on data centers, and suggested that legal AI development is morally equivalent to mass murder. National-audience publications have given them long, sympathetic interviews. Politicians have cited their claims as grounds for regulatory positioning.

The violence is what the ideology promised. A movement that tells a 20-year-old that AI developers are attempting extinction-level mass murder does not get to act surprised when a 20-year-old concludes that self-defense is warranted. After the firebombing, PauseAI deleted the attacker's messages from its server and moved on.

The recruitment works because the targets have already been softened. A young person who cannot afford their rent, cannot see a future in any traditional career, and has been told by the tech elite that they will soon be "useless" is exactly the person most susceptible to a moral story that says their anger has a righteous target. Doom gives them a villain, a purpose, and a community. Nothing else in their life is currently giving them those things.

The Techno-Utopian Failure To Include

The second loud answer comes from the class of people who have the actual power to change the material conditions, and it is arguably worse because it pretends to be helpful.

It is the promise that a small, all-seeing class of technologists will solve everything. AI will generate so much wealth that a universal basic income or universal basic wealth will rain down and everyone will be fine. Trust the billionaires. The abundance is coming. You do not need to understand any of this. Just wait.

The problem with this story is that nobody is building the thing underneath it. No retraining pipeline at any scale that matters. No political coalition that would pass UBI. No trust. And while those things go unbuilt, the same class of people is calling regular humans a "useless class" in public and in private. Yuval Harari coined that phrase. Peter Thiel has publicly equivocated on whether the human species should continue. A meaningful fraction of the transhumanist faction of the AI industry has internalized a worldview in which most humans are along for the ride, to be supported by a kind of elite charity once the machines take over.

This is where the pipeline feeds itself. The techno-utopian class tells young people they will be useless. The doom movement tells them AI is the reason. Neither side offers the actual thing that would close the gap: high-quality applied AI literacy that turns the locked-out into hyperagents.

The longer that vacuum exists, the more the doom movement grows. The more it grows, the more violence happens. The violence then becomes evidence, in certain rooms, that AI is too dangerous to democratize. That reasoning completes the circle and makes the exclusion worse. Every loop of this cycle locks more young people further outside the thing that could have actually helped them.

We Build The Fleet, Not A Single Ark

The intervention that actually works is obvious once the pipeline is named. Close the exclusion. Give people the literacy, the tools, and the community they were told they would never get. Watch the recruitment funnel for doom collapse, because the anger has somewhere better to go.

Here is the shape of how AAS does that, and how we need other institutions to do it too. The Applied AI Society does not build the ark. We build the culture and the infrastructure so that every person who wants an ark can build their own. The difference matters. A single centralized ark requires everyone to trust one institution with their future, which is exactly the posture the current AI elite would love the public to adopt. A fleet of arks, each one owned by the person or community that built it, is how sovereignty actually scales. The paternalistic "we will save you" frame that some in the AI industry have floated is not our model. Nobody is saving anyone. People are building their own things with real help.

Three ingredients make fleet-building possible, and no existing AI institution is delivering any of them at scale:

High-quality, hands-on activation. Activation is the specific AAS word for the moment a person crosses from "I have heard about AI" to "I am using it and it is changing how I work." The generic phrase "upskilling" misses the psychological shift and the compounding that follows. Workshops, not blog posts. A person walks out of the room with a working Personal Agentic OS on their laptop, configured to their real work. The Supersuit Up workshop is the canonical path. It takes an afternoon. The effect on the person who completes one is significant enough that they stop feeling locked out, because they are no longer locked out. That is their ark of one, launched.

Life literacy alongside technical literacy. Applied AI multiplies what a human already brings. A person who has not done the work of knowing who they are, what they are building, and who they are building it with cannot get full leverage out of any tool. Technical literacy without life literacy produces burnout at 100x speed. We teach both.

Sovereign tools and sovereign patterns. A Personal Agentic OS that a person owns and runs on their own machine is a different thing from a subscription to a hyperscaler product. Ownership of your context, your skills, and your workflow is what makes the literacy compound. The doom movement is rightly suspicious of rented sovereignty, and the suspicion is warranted. We offer an alternative architecture that stays with the builder.

Underneath all three: a culture of ark builders teaching each other. One person's Jarvis skill file is someone else's starting point. One chapter leader's workshop playbook becomes another chapter's scaffold. One small business's AI transformation is a reference implementation for the next. The fleet grows faster when every ark built adds to the shared infrastructure for the next ark. This is the community that matters, and building it is most of what AAS actually does day to day.

See Inclusive Technological Advancement for the design principle underneath this. The worth of AI progress is measured by how fast the floor rises, not by how far the frontier advances for those already at it.

Arks At Every Scale

The ark you build is yours to scope. The same activation, literacy, and sovereign-tool pattern scales from the smallest ark to the largest. We help people choose which scale matches their calling and then build the thing.

Ark of one. The Jarvised individual. Own context, own tools, own compounding. This is the entry point and the floor of the fleet. Get your Personal Agentic OS running and you are on the ascending side of the elevator economy. Most people never need to scale beyond this to radically change their life.

Ark for a team. A founder and co-founder, a family, a creative partnership, a small practice. Shared context, shared skill files, coordinated activation. The team that operates on shared agentic infrastructure compounds together. The Ramp case shows the same dynamic already playing out, at a larger scale, inside fast-moving orgs.

Ark for a community. A local chapter, a church, a guild, a neighborhood. Collective activation, civic work, service for a shared geography or mission. The AAS chapter leader playbook is how we help people build this scale of ark.

Ark for a business. A company structured for the AI-native economy. Every role with a Jarvis. Shared skill library. Agentic infrastructure. The Sovereign Agentic Business OS describes the pattern. This is the scale at which economic capacity gets compounded for employees, customers, and the surrounding community all at once.

Ark for a city. Civic infrastructure that treats every resident as an ark builder in the making. Real partnerships between local government, chapters, schools, and businesses. This is where the elevator economy can be corrected at the scale that actually moves political conditions.

Each scale of ark is an expression of the same core pattern. Each one is sovereign. Each one contributes back to the shared culture of ark building for the next builder. No single ark has to carry everybody, because there is no single ark. That is the whole point.

What This Means For A Young Person Reading This

If you are angry about the economy, good. Keep the anger. Use it.

If you are being told that AI is about to kill everyone and the righteous move is to sabotage the companies building it, that framing is a trap laid by people with their own funding incentives. It will not make your rent cheaper. It will not get you a job. It has a real probability of sending you to prison over something you did not fully understand. The people at the top of that movement are not the ones getting arrested.

If you are being told to trust the billionaires, that the abundance is coming and a universal basic income will save you, that is a different trap. Nobody is building the thing underneath that promise. The same people making the promise have already decided, in private, that you might be part of the "useless class." That is not where your future lives either.

Your future lives in the hands that can operate applied AI at a level most people have not yet reached. That level is learnable. The tools are available. The literacy is open and free. We publish the workshops. We run the community. We will help you get there.

Get Jarvised. Come to a Supersuit Up workshop. Join the community. Read Your Two Futures for the stakes of the decision you are about to make. Then get to work.

Uselessness is a function of literacy and infrastructure, not a property of a person. Build your ark first. Then help the people you love build theirs.

Our whole organization exists to help people get activated and empowered for service of humanity as fast as possible. That is the work. Every person we Jarvis adds one more vessel to the fleet. The fleet is the ark.

A Note To Our Peers In The AI Field

The doom movement and the techno-utopian movement share a failure of imagination. Both believe, in different directions, that ordinary humans are the problem. One believes humans will be destroyed by the tools. The other believes humans will be made obsolete by the tools. Both answers treat humans as passive participants in a story written by the people building the infrastructure.

We reject that story. The entire point of applied AI, when it is done with any decency, is to amplify what humans can do. That requires treating humans as capable of the full amplification. Humans are the point of the story.

The violence is downstream of the exclusion. Every firebomb thrown in 2026 was incubated in a young mind that had been given no real path into the AI economy and every reason to believe it was being held outside the gates on purpose. The industry has the money to close that gap. What it lacks is the will to prioritize it.

If you are building AI, build tools your grandmother, your neighbor, and the 20-year-old trying to afford rent in your city can all actually use. Fund the literacy. Fund the community. Fund the workshops. The counter-movement to stop-AI violence is a generation that feels included in what comes next. Needing better security at the data centers is a downstream consequence of getting that wrong.

Fund ecological regeneration with the same urgency. The note at the councilman's shot-up house was "No Data Centers," and anyone who treats that as pure NIMBY rage is not listening. The AI industry is siting enormous energy and water infrastructure into communities that already felt abandoned by every previous wave of industrial decision-making. A data center with a restoration agenda for the watershed, the grid, and the neighborhood around it is a different political object than one without. We are going to find out which kind we built when the backlash matures. The right answer is the one where the industry led, not the one where the industry was forced to retreat.

No amount of force in stating the good things we believe about AI will be enough if the inclusion work and the regeneration work are not also happening. Promises about AI-driven abundance land differently when people feel the promise in their actual lives.

Further Reading

- Hyperagency: What it looks like when a human wraps themselves in AI systems that amplify everything they already bring.

- The Hyperagency Gap: The defining inequality of the AI era. Closing it is our core mission.

- Inclusive Technological Advancement: Rising floors, not only advancing frontiers.

- Your Two Futures: The personal-level version of the fork.

- RIP To The Career Ladder: The labor-market reality underneath the anger.

- The Applied AI Canon: What we believe.

- Supersuit Up Workshop: The hands-on way into the third path.

- Garry Tan: From AI Doomerism to Molotov Cocktail: The reporting on the firebombing pipeline.