# Applied AI Society Documentation — Full Content

> This file contains the full text of every page in the Applied AI Society docs.

> Generated automatically at build time.

---

# Co-Steward With Us

URL: https://docs.appliedaisociety.org/docs/about/co-stewardship

# Co-Steward With Us

*The most powerful way to partner with the Applied AI Society is to co-maintain the truth.*

---

## The Model

The Applied AI Society maintains an open source knowledge base of playbooks, concepts, frameworks, and workshop formats for applied AI education. This is not a static textbook. It is a living, version-controlled commons that evolves every week based on what practitioners and educators are learning in the field.

We do not need every organization to become an AAS chapter. We need organizations to co-steward the shared truth about what works.

The metaphor: Google, Amazon, and Microsoft compete fiercely. But they all contribute to the Linux Foundation because every data center runs Linux. The shared infrastructure benefits everyone. Applied AI education is the same. The playbooks for how to teach people to thrive in the applied AI economy are public goods. They should be maintained like public goods: openly, collaboratively, and with real accountability to quality.

## What Co-Stewardship Looks Like

### Share Your Playbooks

If your organization runs AI workshops, hackathons, mentor networks, skill trees, or any structured learning program, your playbooks belong in the commons. Not locked in a Google Drive. Published, version-controlled, and available for anyone to fork, adapt, and improve.

What we are looking for:

- **Workshop formats.** How do you run your events? What works? What did you try that failed? The [Personal Agentic OS Workshop playbook](/docs/playbooks/practitioner/training-the-workshop) is an example: continuously updated based on real sessions, honest about what went wrong, and immediately usable by anyone who wants to run one.

- **Curriculum and skill trees.** How do you take someone from zero to building with AI agents? What is the progression? What are the prerequisites?

- **Expansion playbooks.** How did you scale from one location to five? What did the second chapter need that the first one did not?

- **Concepts and frameworks.** Have you coined a term or developed a mental model that helps people understand applied AI? Contribute it. Name it. Let the community build on it.

### Co-Maintain What Exists

Contributing a playbook once is valuable. Co-maintaining it over time is where the real leverage appears.

Applied AI moves fast. A workshop format that worked in January may need updates by March because the tools changed. A concept page that was accurate last month may need a new section because the landscape shifted. [Compounding docs](/docs/concepts/compounding-docs) only compound if they stay current.

Co-stewardship means:

- Filing issues when something is outdated or wrong

- Submitting updates based on your own experience running the playbook

- Adding lessons learned from your community to the shared knowledge base

- Keeping the signal high and the noise low ([signalmaxxing](/docs/concepts/signalmaxxing) applies to docs, not just feeds)
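
One lightweight way to find pages worth reviewing is to flag anything that has not been touched in a while. The sketch below is illustrative, not part of the AAS tooling: the `find_stale_pages` helper, the 60-day threshold, and the dates are all assumptions. In practice the last-updated dates could come from version-control history.

```python
from datetime import date, timedelta


def find_stale_pages(last_updated, today, max_age_days=60):
    """Return page paths last updated more than max_age_days ago, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(updated, path) for path, updated in last_updated.items() if updated < cutoff]
    return [path for _, path in sorted(stale)]


# Hypothetical last-updated dates, made up for illustration.
pages = {
    "docs/playbooks/practitioner/training-the-workshop": date(2026, 1, 10),
    "docs/concepts/compounding-docs": date(2026, 3, 1),
    "docs/concepts/signalmaxxing": date(2025, 11, 20),
}

stale = find_stale_pages(pages, today=date(2026, 3, 15))
for path in stale:
    print(f"Review candidate: {path}")
```

A page that shows up here is not necessarily wrong. It is a prompt for someone who has run the playbook recently to confirm it still holds or to file an issue.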

### Run the Playbooks in Your Community

The best way to improve a playbook is to run it. Take the [Personal Agentic OS Workshop format](/docs/playbooks/practitioner/training-the-workshop), run it with your community, and report back what worked and what did not. Take the [event formats](/docs/playbooks/chapter-leader/event-formats), adapt them to your audience, and share what you learned.

Every community is different. What works for business owners in Austin may need adjustments for engineering students in Milwaukee or artists in Los Angeles. Those adjustments are the contribution. They make the playbooks more universal and more useful for the next community that picks them up.

## Who This Is For

- **University AI clubs** that have built learning programs and want to share them with a broader network

- **Community organizations** running applied AI events in their city

- **Corporate training teams** that have developed internal AI education and are willing to open source parts of it

- **Individual practitioners** who have developed workshop formats, frameworks, or curricula worth sharing

- **Anyone** who believes applied AI literacy is a public good and wants to help maintain it

## What You Get

This is not a one-way contribution. Co-stewards get:

- **Access to the full commons.** Every playbook, concept, framework, and workshop format that every co-steward has contributed. Your organization benefits from the collective experience of every community in the network.

- **Visibility.** Your organization and contributors are credited in the docs. When someone runs your playbook in another city, they know where it came from.

- **Network.** Connection to a growing network of educators, practitioners, and community builders who are all working on the same problem from different angles.

- **Feedback loops.** When other communities run your playbook and improve it, those improvements flow back to you.

## How to Start

1. **Look at what exists.** Browse the [playbooks](/docs/playbooks), [concepts](/docs/concepts), and [event formats](/docs/playbooks/chapter-leader/event-formats). See what is already documented and where the gaps are.

2. **Share what you have.** Email your playbooks, skill trees, workshop formats, or curriculum to us. We will work with you to integrate them into the commons in a way that is useful to everyone.

3. **Run what exists.** Pick a playbook and run it in your community. Report back with what worked and what needs updating.

4. **File issues.** Found something outdated or wrong? Open an issue. That is a contribution.

Reach out via the [contact page](/docs/contact) or join the [Discord](https://discord.gg/K7uWJBMFaN) to get started.

## Current Co-Stewards

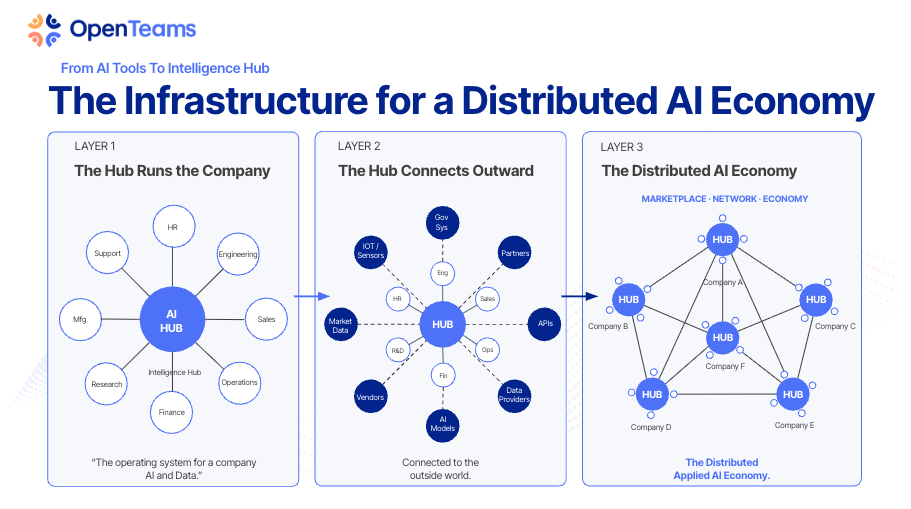

- **[OpenTeams](https://openteams.com/)** and **[Open Technology Incubator](https://otincubator.com/)**: Founding sponsors. Building the infrastructure layer for applied AI and open source.

- **[Milwaukee AI Club](https://milwaukeeaiclub.com/)**: 500+ member student-led AI organization across 5 Midwest universities. NVIDIA expansion partner. Contributing mentor network playbooks and skill trees.

- **[Applied AI Institute for Europe](https://appliedai-institute.de/)**: European applied AI research and education institute. Bridging applied AI literacy across the Atlantic.

*This list grows as more organizations contribute to the commons.*

---

## Further Reading

- [Compounding Docs](/docs/concepts/compounding-docs): Why every document contributed to the commons makes the whole system smarter

- [Signalmaxxing](/docs/concepts/signalmaxxing): Keeping the knowledge base high-signal

- [Why Field Notes](/docs/philosophy/why-field-notes): Why living field notes beat static textbooks

- [Truth Management](/docs/truth-management): The discipline of maintaining shared truth

- [Permissionless Knowledge](/docs/concepts/permissionless-knowledge): Making expertise accessible without requiring anyone's calendar

- [The Mission Harness](/docs/concepts/mission-harness): The infrastructure of shared purpose that co-stewardship enables

---

# What is the Applied AI Society?

URL: https://docs.appliedaisociety.org/docs/about

# What is the Applied AI Society?

## The Core Idea

The Applied AI Society is a 501(c)(3) nonprofit that champions [applied AI literacy](/docs/applied-ai-literacy) as the way to shorten the time it takes young people to land their first paid applied AI opportunity.

That's it. That's why we exist. The path from "I don't know how to make money in this economy" to "I got my first applied AI opportunity" is too long, too confusing, and too lonely. Most people don't even know what's possible. We build the literacy that makes the path visible, then we make it shorter.

## The Moment We're In

[AGI is effectively here](/docs/concepts/effective-agi) for anyone who knows how to wield it. A single person with the right system can do what used to require a team of ten. The bottleneck is no longer the technology. It is the human.

This is creating a split. Some people are compounding their capabilities faster than at any point in history. Others are watching their skills erode in real time. We call the people going up [hyperagents](/docs/concepts/hyperagency): humans who have wrapped themselves in AI systems that amplify their unique capabilities, judgment, and vision. The economy is diverging into hyperagents and everyone else.

AAS exists to help as many people as possible experience hyperagency. The tools are accessible. The knowledge is available. What most people lack is the path: the literacy, the community, and the practical guidance to suit up. That is what we build.

New roles are emerging as quickly as old ones are shrinking: AI implementation specialists, automation architects, agent developers, fractional AI executives. Companies across every industry are desperate for people who can actually apply AI. These are six-figure roles that barely existed two years ago. We're documenting them as they form: [see the full list →](/docs/roles)

Nobody has this figured out. So let's all agree nobody has this figured out, and let's share notes.

For the full picture of the urgency and what applied AI actually means, read **[The Writing on the Wall](https://digitalcommons.humboldt.edu/digitallab/13/)** by Ron Roberts and Gary Sheng.

## Founded By

Applied AI Society was founded by Gary Sheng, with Travis Oliphant (creator of NumPy and SciPy, cofounder of Anaconda) as founding advisor. Gary brings deep experience in community building, product, and applied AI implementation. Travis brings decades of building open-source tools that power modern computing and data science.

They share the same conviction: young people have real AI fluency but no clear path to turn it into a living. The Society exists to fix that.

## What We Are

The Applied AI Society is a 501(c)(3) nonprofit helping people and organizations transition prosperously into the applied AI economy. Our focus is on college-aged students transitioning into the workforce, but we're open to all professionals.

Through hyperlocal chapters led by young leaders, we create spaces where the next generation of applied AI practitioners learns by doing. We host events where real people share how they're actually making money: consulting, startups, workflow automation, agentic AI products, freelance engineering, and more. We share open documentation and connect members with businesses that need their fluency.

We call the people who bridge the gap between AI capability and real-world implementation "applied AI practitioners." They help organizations actually use AI to better serve their customers and communities. That's the career path we're building together.

## What We Believe

At the heart of everything we do is a simple idea: AI should free people to do more meaningful work, not replace them. And the people it frees should own their tools, their data, and their future.

Some work requires a human soul: presence, judgment, taste, care, responsibility. Some work is necessary but doesn't carry that weight. Thinking machines exist to carry the second kind so humans can spend more time on the first.

That's the [Applied AI Canon](/docs/philosophy/canon). Efficiency is not the goal. More soul-requiring work is.

## Why Sovereignty Matters

There is a deeper layer to this mission that goes beyond jobs and literacy.

The biggest AI companies are building platforms designed to capture your data, your workflows, and your dependency. [The lock-in is coming](/docs/concepts/the-lock-in-is-coming). It is not a conspiracy. It is the structural incentive of every VC-backed hyperscaler: subsidize adoption, build dependency, monetize the captive audience. They start with the model, then build the harness, then capture your workflows and integrations. Each step up the stack owns more of your operations. Every major hyperscaler (OpenAI, Anthropic, Google, Microsoft) is converging on the same goal: owning your entire business. Not just your work life. Your code, your documents, your email, your calendar, your strategic thinking, your customer data, your workflows, your integrations. All flowing through their systems, all creating dependency that compounds until switching is unthinkable.

We believe people should own their own intelligence. Not rent it. Not subscribe to it. Own it. That means owning your [context lake](/docs/concepts/context-lake) (the knowledge base that makes your AI useful), owning your [harness](/docs/concepts/harness-engineering) (the system wrapped around the model), and keeping everything in portable, platform-independent files that you can take anywhere.

The [sovereignty stack](/docs/concepts/the-sovereignty-stack) maps every layer of your digital life, from the silicon in your computer to the content you publish, and identifies where you are dependent on someone who is not you. We are training builders who understand this stack and can help others achieve sovereignty at every layer.

Open source models are getting better every quarter. Open harnesses are maturing. The sovereign alternative is being built right now, piece by piece, by builders who believe people deserve to own their future. If we build it to be as easy and effective as the proprietary platforms, it is just a matter of time.

Anyone who wants a sovereign future should be part of this movement. We are training the builders of that future.

## Applied AI Literacy

Applied AI Society is a champion and leader in [applied AI literacy](/docs/applied-ai-literacy).

The gap isn't just that companies need implementation help. The deeper gap is that people don't know what's possible. The Mayor of Austin put it perfectly: "You say AI to people and their knee-jerk is 'we're gonna have more data centers.' They don't know what the application is."

Not understanding applied AI is the new "I don't know how to read." Applied AI literacy means understanding what AI can actually do for your business, your career, and your community. Not just knowing that AI exists, but knowing how to apply it to real problems you face today.

We're developing courses, frameworks, and resources to make applied AI literacy accessible to everyone. [Learn more about our approach to applied AI literacy →](/docs/applied-ai-literacy)

## How It Works

**Local communities.** Applied AI Society runs through communities in cities and on campuses. Sometimes that is a formal chapter. Sometimes it is a student group within an existing AI club. Sometimes it is three people who decide to host their first event and see who shows up. The format matters less than the outcome: people in a room, learning applied AI by doing it together.

**Events.** Every Applied AI Society event is an activation into the applied AI economy. Our flagship format, [Applied AI Live](/docs/playbooks/chapter-leader/applied-ai-live), brings together live players: practitioners who are rapidly evolving their techniques and excited to share field notes from the front lines. The audience doesn't just hear about what's possible. They get pulled into the current. We're also developing new formats like [Applied AI Office Hours](/docs/playbooks/chapter-leader/event-formats#applied-ai-office-hours) (where business owners get hands-on help from practitioners) and hackathons. [See all event formats →](/docs/playbooks/chapter-leader/event-formats)

**Field notes, not textbooks.** The site you're reading right now is a living field guide, not a static curriculum. In a field that changes weekly, textbooks are outdated before they reach the reader. Social media rewards hype over accuracy. We need a different model: [field notes](/docs/philosophy/why-field-notes) written by practitioners who are actually doing the work, source-controlled and continuously updated, honest about what we don't know yet.

[Roles](/docs/roles) document the careers emerging. [Concepts](/docs/concepts) name the frameworks practitioners are using right now: [context lakes](/docs/concepts/context-lake), [harness engineering](/docs/concepts/harness-engineering), [the sovereignty stack](/docs/concepts/the-sovereignty-stack), [zero-question assessments](/docs/concepts/zero-question-assessments), and many others. [Case studies](/docs/case-studies) show what the work actually looks like. [Playbooks](/docs/playbooks) capture how to run events, find clients, and build chapters. None of this is finished. All of it is evolving. These docs become source material from which chapter leaders and university partners worldwide can create their own derivative courses, tailored to their audiences. That's how education scales without becoming propaganda.

**One public community, many invite-only spaces.** Our [Discord](https://discord.gg/K7uWJBMFaN) is the single public community space where anyone can join, ask questions, share what they're working on, and connect across chapters. Beyond that, chapter leaders and practitioner groups run their own invite-only group chats (Signal, iMessage, Telegram, whatever works locally). These smaller spaces feel special because they are. You earn your way in by showing up, doing real work, and adding value. The public Discord is the front door. The invite-only chats are the living rooms.

**Sponsors, not gatekeepers.** Local businesses and AI companies sponsor chapters because they want access to AI-native talent. Sponsors fund the community. They don't control it.

## For Students and Emerging Practitioners

You already use AI every day. You prompt, you iterate, you build things your professors haven't seen yet. That's real fluency. The problem is there's no clear path from "I use AI" to "I get paid to apply AI."

Applied AI Society shortens that path.

You'll meet practitioners who are making money in applied AI right now. You'll hear exactly how they got their first opportunities. The paths are more varied than you think: workflow automation, AI consulting, building AI-native products, intrapreneurship inside existing companies, agent development, freelance engineering. [See the full landscape →](/docs/playbooks/practitioner/applied-ai-economy)

You'll build a portfolio of applied work and connect with organizations that need exactly what you know how to do.

Don't think of yourself as "I don't know anything." You're AI native. You can pick things up. You're flexible. That matters more than any credential right now. We're here to help you turn that fluency into your first opportunity.

## For Universities and Young Adult Organizations

Your students and young members are anxious about AI and their careers. They're right to be. The job market is shifting under their feet, and traditional curricula can't keep up with weekly model releases.

Applied AI Society gives young people a community where they can channel that anxiety into action. Our events draw participants from across departments and backgrounds (not just CS) because applied AI is cross-disciplinary. Business students, design students, liberal arts students all bring perspectives that make implementations better.

Bringing Applied AI Society to your campus does not require starting a new club from scratch. If you already have an AI club, we can support it with playbooks, shirts, small event budgets, and a connection to the national network. If you do not have one, we can help you start something lightweight. The barrier to entry is low. We provide the playbooks, the event formats, and the community infrastructure.

**Want to bring Applied AI Society to your university or organization?** [Get in touch →](/docs/contact), or explore a [university partnership →](/docs/university-partnerships).

## Agent File Standards

As AI agents become the primary way people interact with codebases, organizations, and each other, we need shared conventions for how agents find and understand information. AAS identifies emerging patterns in the agent tooling ecosystem and publishes lightweight specs so the community can build on shared foundations.

**[INTEGRATE.md](/docs/standards/integrate-md)** is a file format for teaching agents how to wire a library into an existing codebase. Instead of reading human-oriented docs and guessing, the agent reads INTEGRATE.md and executes the integration steps directly. [Read the spec →](/docs/standards/integrate-md)

**[ALIGN.md](/docs/standards/align-md)** is a file format for agent-readable alignment evaluation. Someone pastes your ALIGN.md into their agent and says "evaluate whether we should work together." The agent reads both parties' files and returns an honest assessment of fit. The goal: truncate the time between meeting someone and knowing what the first pilot project is. [Read the spec →](/docs/standards/align-md)

If you're considering working with us, check our ALIGN.md. If you publish your own, send it along and we can run bilateral evaluation before anyone takes a call.

[Browse all standards →](/docs/standards)

## Get Involved

- **Attend an event:** [See upcoming events →](https://appliedaisociety.org/events)

- **Start or join a community:** [Learn how →](/docs/playbooks/chapter-leader)

- **Present at an event:** [Presenter playbook →](/docs/playbooks/presenter)

- **Read the docs:** [Browse playbooks, standards, case studies, and philosophy →](/docs/philosophy)

- **Join the community:** [Join our Discord →](https://discord.gg/K7uWJBMFaN) to connect with practitioners and chapter leaders across cities. Follow [@AppliedAISoc on X](https://x.com/appliedaisoc) for updates.

---

# The Applied AI Literacy Earthshot

URL: https://docs.appliedaisociety.org/docs/applied-ai-literacy/earthshot

# The Applied AI Literacy Earthshot

## The Commitment

Applied AI Society is building the best open-source applied AI literacy source material in the world, co-created by the most trusted, most experienced applied AI practitioners in the world.

This is the foundation. Courses derive from it. Workshops derive from it. Tools derive from it. Translations derive from it. But the source material is the thing. It is the documented, version-controlled, co-created truth about what applied AI is, how to do it, and how to teach it. Everything else is downstream.

This is not a product. It is a mission. We are a 501(c)(3) nonprofit organization, and this is what we exist to do.

## Why Source Material, Not Just Courses

Anyone can create a course. What the world lacks is a trusted, open, practitioner-vetted source of truth about applied AI that anyone can build on.

Think of it the way [Truth Management](/docs/truth-management) works inside an organization: the documented truth is the foundation that every human and every AI agent builds from. Without it, you get scattered assumptions, contradictory advice, and coordination failures. With it, you get aligned action at scale.

Applied AI literacy has the same problem at a global level. There are thousands of AI courses. There is no shared, open, practitioner-co-created foundation that everyone can trust, translate, fork, and build on. That is what we are creating.

The source material will be:

- **Open source.** Anyone can read it, use it, fork it, improve it.

- **Co-created by leading practitioners.** Not written by one person or one organization. Built with the people who are actually doing the work.

- **Version-controlled and living.** It evolves as the field evolves. It is built on real experiences, not speculation. [Read why we chose field notes over textbooks →](/docs/philosophy/why-field-notes)

- **Translated into every language.** The applied AI literacy gap is global. The source material must be too.

- **Accessible to everyone.** No paywalls on the source material itself. Ever.

## Why This Is the Greatest Need

[Applied AI literacy](/docs/applied-ai-literacy) is the defining gap of this moment. No institution is solving it at the scale and speed required. Universities are behind. Governments are behind. The market is moving faster than any single organization can keep up with.

This is why the source material must be open source. This is why it must be co-created. No single organization, including ours, has the full picture.

## How It Works

### Open Source, Co-Created

The source material is being built with leading applied AI practitioners from organizations including [OpenTeams](https://openteams.com/), [Applied AI Deutschland](https://www.appliedai.de/en/), and practitioners from the Applied AI Society community around the world.

It is open to feedback, contributions, and pull requests from anyone. If you are a practitioner doing this work, you have something to contribute. If you are an educator, a translator, a business leader who has applied AI and learned something, we want to hear from you.

The goal is not to create a curriculum that belongs to us. The goal is to create the world's foundational source material for applied AI literacy, and to steward it as a community.

### What Derives from the Source Material

**Courses.** Practical, project-based instruction built on top of the source material. Operated on a pay-it-forward model: suggested donation, free if you can't afford it, no cap if you want to help us reach more people.

**Workshops and events.** Chapter leaders and community organizers use the source material to run applied AI literacy programming in their cities and campuses.

**Tools.** Open-source educational technology, assessment tools, and resources that anyone can use, adapt, and improve.

**Translations.** Every framework, every guide, every resource, translated into as many languages as possible.

**Corporate programs.** Organizations use the source material to upskill their teams with applied AI capabilities.

### Pay-It-Forward Model

Courses and structured instruction built on the source material operate on a suggested donation model.

If you can afford the suggested donation, it helps us reach more people: more translations, more chapters, more events, more tools.

If you cannot afford it, the courses are free. No questions asked. Everyone deserves access to this knowledge.

If you want to donate beyond the suggested amount, every additional dollar goes directly toward expanding access. More translations. More communities reached. More practitioners trained. More open-source tools built.

There is no cap. If an organization wants to fund applied AI literacy for a thousand people, we will make it happen.

### Every Company Can Contribute

We want every company to want to be a part of this.

**Contribute to the source material.** Submit improvements, case studies, frameworks, and real-world examples from your organization's applied AI journey.

**Sponsor the mission.** Fund the creation of courses, translations, and tools that make applied AI literacy accessible worldwide.

**Help translate.** The source material needs to reach every language and every community. Translation is one of the highest-leverage contributions anyone can make.

**Build tools.** Open-source products, educational models, and tools that make the source material more accessible and more effective.

The vision is a gravitational center: the most trusted, most foundational source of truth about applied AI. Contributed to by practitioners and companies from every industry and every country. The more people who contribute, the better it gets for everyone.

## The Standard

Whoever defines what applied AI literacy means owns the conversation. We intend to define it, not by declaring ourselves the authority, but by building source material so good, so open, and so well-supported by real practitioners that it becomes the standard because it works.

This is a living body of work. It will evolve as the field evolves. It will be built on the real experiences of real practitioners, not on speculation about what people might need to know.

## Further Reading

**[The Writing on the Wall: The Rise of 'Applied AI' and the Life-or-Death Choice Every CEO Must Make Now](https://digitalcommons.humboldt.edu/digitallab/13/)** by Ron Roberts and Gary Sheng. The urgency behind the Earthshot: the numbers, the definition of applied AI, and why this is the greatest need.

## Get Involved

This is too big for any one organization. We need practitioners, educators, translators, companies, and community builders.

- **Contribute to the source material:** [GitHub](https://github.com/appliedaisociety)

- **Join the community:** [Discord](https://discord.gg/K7uWJBMFaN)

- **Partner with us:** [Contact](https://appliedaisociety.org/contribute)

- **Start a chapter:** [Chapter leader playbook](/docs/playbooks/chapter-leader)

We think this is the greatest need humanity has right now. Let's build this together.

---

# Applied AI Literacy

URL: https://docs.appliedaisociety.org/docs/applied-ai-literacy

# Applied AI Literacy

## What It Is

Applied AI literacy is the ability to understand what AI can do and to put it to work on real problems.

It's not about knowing that AI exists. Everyone knows that. It's not about being able to define "large language model" or "neural network." Applied AI literacy means you can look at a business process, a community need, or a career challenge and see where AI fits. You can evaluate tools, scope projects, ask the right questions, and build (or commission) real solutions.

Think of it this way: knowing that electricity exists didn't change anyone's life. Knowing how to wire a building, run a factory, or light a hospital did. Applied AI literacy is the wiring knowledge of the AI age.

## Why It Matters Now

We are in the middle of a flood.

Jobs are shifting faster than institutions can adapt. Information overload makes it harder to separate signal from noise. Deepfakes erode trust. The economy is splitting into a K-shape: those who can harness AI are accelerating, and those who can't are falling behind.

The numbers tell the story. AI computing demand has grown roughly a millionfold in the last two years ([NVIDIA GTC 2026 Keynote](https://blogs.nvidia.com/blog/gtc-2026-news/)). Over $150 billion in venture capital flowed into AI startups in 2025, the largest year of startup investment in human history. At least $1 trillion in AI infrastructure is being built out through 2027. This is not hype. This is capital being deployed at a scale that reshapes entire economies.

The Mayor of Austin captured the problem perfectly: "You say AI to people and their knee-jerk is 'we're gonna have more data centers.' They don't know what the application is."

That's the literacy gap. Most people, most businesses, and most governments have no mental model for what AI can actually do for them. They hear "AI" and think of robots, job loss, or science fiction. They don't think: "This could cut my invoice processing from three days to ten minutes" or "This could help my students get personalized feedback on their writing" or "This could help my city respond to constituent requests twice as fast."

Without applied AI literacy, people can't see the opportunities forming around them. They can't protect themselves from the risks, either. They're navigating a new landscape with an old map.

We are committed to building the best open-source applied AI literacy [source material](/docs/applied-ai-literacy/earthshot) in the world, co-created by leading practitioners. Courses, tools, and translations all derive from it.

## Learning to Apply AI Is the New Learning How to Read

Learning to apply AI is not "the new learning how to code." It is the new learning how to read. It is that foundational. Not knowing how to apply AI to your business, your career, your community is the new illiteracy.

The foundation model companies (OpenAI, Anthropic, xAI) are all building consulting arms to deploy AI inside companies. They are sending engineers directly into corporate offices. The most powerful AI companies on earth looked at the market and said: "Businesses can't figure out how to use this. We need to go in and do it for them."

But here is what they cannot scale: trust. Relationships are the bottleneck to applying AI, not compute, not models, not tokens. A trusted person who can sit down with a business owner, understand their situation, and actually help them. That is the job of the future. And no corporation can monopolize it, because trust is local, relational, and earned one conversation at a time.

Inference is becoming commoditized. LLMs are becoming interchangeable. But trusted applied AI practitioners are the scarcest resource in the market. You can do this work as a solo practitioner or with a very small team. The tools are accessible. The demand is effectively infinite. And the window is wide open.

## Who It's For

Applied AI literacy isn't just for engineers or tech workers. It's for everyone whose work and life are being reshaped by AI (which is everyone).

**Business owners** who need to know which AI tools are worth investing in and which are hype. Who need to scope AI projects, hire practitioners, and measure results.

**Engineers and developers** who need to move from traditional software to AI-native systems. Who need to understand agents, context engineering, and how to build things that actually ship.

**Students and early-career professionals** who need to turn their AI fluency into paying work. Who need to see the career paths that are forming and understand how to walk them.

**Government leaders and policymakers** who need to make decisions about AI adoption, regulation, and workforce development. Who can't afford to get this wrong for their communities.

**International communities** where the AI economy is arriving fast but the infrastructure, education, and support systems haven't caught up yet.

## Further Reading

**[The Writing on the Wall: The Rise of 'Applied AI' and the Life-or-Death Choice Every CEO Must Make Now](https://digitalcommons.humboldt.edu/digitallab/13/)** by Ron Roberts and Gary Sheng. Published in the Internet Journal / Humboldt State Digital Commons. A deep dive into the numbers behind the disruption, what applied AI actually is (and is not), how businesses go extinct in the AI economy, and the existential choice every organization is now making.

## How Applied AI Society Is Leading This

Applied AI Society isn't waiting for someone else to define what applied AI literacy looks like. We're building it.

**Through community.** Our hyperlocal chapters create spaces where people learn applied AI by doing it, not by reading about it. Events like [Applied AI Live](/docs/playbooks/chapter-leader/applied-ai-live) put real practitioners on stage sharing exactly how they apply AI to make a living. The audience doesn't just listen. They leave with something they can try on Monday.

**Through open documentation.** The [docs you're reading right now](/docs/about) are a living field guide to the applied AI economy. [Roles](/docs/roles), [playbooks](/docs/playbooks), [case studies](/docs/case-studies), and [concepts](/docs/concepts) that get updated as the field evolves. All open. All free.

**Through partnerships.** We're building a coalition with organizations that share this mission. [OpenTeams](https://www.openteams.com/) connects open-source talent with enterprise needs. Universities want programming that keeps pace with the real economy. City governments need workforce development that actually works. International partners are bringing applied AI literacy to communities around the world. Together, we can reach further than any one organization could alone.

**Through standards and frameworks.** We're developing competency frameworks that help people and organizations understand what "good" looks like in applied AI work. Not certifications for the sake of credentials, but practical benchmarks that map to real skills and real outcomes.

## What's Coming

We're developing courses, frameworks, and resources to make applied AI literacy accessible and practical. This includes:

- **Courses** that teach applied AI skills through real projects, not toy examples

- **Competency standards** that help individuals and organizations measure readiness

- **Corporate programs** that help businesses upskill their teams with applied AI capabilities

- **Community resources** that chapter leaders can use to run literacy-focused events

This work is underway and we'll share more as it takes shape. If you want to help build it, we want to hear from you.

## Get Involved

Applied AI literacy is too important to leave to any one organization. We need practitioners, educators, business leaders, and community builders working on this together.

- **Join the community:** [Discord →](https://discord.gg/K7uWJBMFaN) | [X →](https://x.com/appliedaisoc)

- **Attend an event:** [Upcoming events →](https://appliedaisociety.org/events)

- **Start a chapter:** [Chapter leader playbook →](/docs/playbooks/chapter-leader)

- **Partner with us:** [Get in touch →](https://appliedaisociety.org/contribute)

Nobody has applied AI literacy figured out. That's exactly why we need to work on it together.

---

# Applied AI Week

URL: https://docs.appliedaisociety.org/docs/applied-ai-week

# Applied AI Week

Coming Soon

The applied economy deserves a week of its own.

## Austin, Texas, 2026

Starting in Austin, the applied AI capital of the world. Already expanding to cities around the world.

Most people still think of AI as something that happens in data centers or research labs. But the real story is playing out in businesses, classrooms, and organizations across every sector. The applied economy is not coming. It is here.

And it deserves a full week dedicated to helping people understand how to upskill into it, gathering the best thought leadership and practitioners all in one place.

## What to Expect

- **Thought leadership** from the people actually building in applied AI right now

- **The best leaders and practitioners** gathered in one place

- **Austin, Texas** as the headquarters for the movement

- **Global expansion** bringing the format to cities around the world (already active in Bordeaux, France and growing)

## Stay in the Loop

If you want to stay ahead of everything happening in the applied AI economy, especially the headquarters event in Austin, subscribe to our Substack. We will share dates, lineup, and location details there first.

---

# Generating Assets with AI

URL: https://docs.appliedaisociety.org/docs/brand/ai-generation

# Generating Brand Assets with AI

How to use Gemini, Midjourney, DALL-E, or other image generators to create on-brand graphics for Applied AI Society materials.

---

## Master Prompt

Copy this as your base. Attach the style reference images below. Then add what specific asset you need at the end.

```

I need to create a graphic for "Applied AI Society," a community for AI practitioners.

BRAND STYLE:

- Warm, inviting, optimistic, nature-inspired

- Think Windows XP rolling hills, pastoral sunrise vibes

- NOT techy, NOT futuristic, NOT sci-fi, NO circuits/grids/nodes

- Feels human, grounded, welcoming (like a positive natural progression)

COLORS (use these exact hex codes):

- Orange #E67B35 (primary brand color)

- Yellow/Gold #FFC14D (gradients, sun, accents)

- Cream #FAF7F1 (warm backgrounds, never pure white)

- Olive Green #5B6E4D (hills, nature elements)

- Dark #1A1A1A (text, contrast)

STYLE KEYWORDS: soft gradients, minimal, layered hills, sunrise glow, stylized/illustrated (not photorealistic)

BACKGROUND STYLE: Warm cream with subtle paper/linen texture, soft natural lighting
like watercolor paper. NOT pure white, NOT flat digital background.

TYPOGRAPHY (if generating text):

- Font: Bold geometric sans-serif (Helvetica Neue Bold, Arial Bold)

- Main text color: Dark #1A1A1A

- Highlighted phrases: Orange #E67B35

- Style: Clean, professional, good letter spacing, readable

- Use curly quotation marks (“ ”)

AVOID: circuits, grids, nodes, networks, neon, glowing tech, metallic, cold, clinical, dystopian, sci-fi, pure white backgrounds, decorative/script fonts for main text

[ATTACH: rolling-hills.png and sun.png as style references]

---

WHAT I NEED: [Describe the specific asset here, e.g., "A 16:9 banner with hills at bottom, sunrise glow at top, space for text overlay"]

```

---

## Style References

When prompting AI image generators, attach these as style references:

### Paper Background Texture

**Download for reference:** [paper-background.png](/img/paper-background.png)

**Style notes:** Soft cream/off-white with subtle paper grain, organic texture, warm natural lighting feel

### Rolling Hills

**Download for reference:** [rolling-hills.png](/img/rolling-hills.png)

**Style notes:** Soft gradients, olive greens (#5B6E4D), gold accents (#E8B923), layered hills, stylized/not photorealistic

### Sun

**Download for reference:** [sun.png](/img/sun.png)

**Style notes:** Simple radial shape, gold center (#E8B923), cream/warm glow, minimal details

### Logo Examples

---

## Color Palette for Prompts

Include these colors in your prompts:

| Name | Hex | Description |
|------|-----|-------------|
| Orange | #E67B35 | Primary brand color |
| Yellow/Gold | #FFC14D | Gradients, sun elements |
| Cream | #FAF7F1 | Warm backgrounds |
| Olive Green | #5B6E4D | Hills, nature elements |
| Dark Text | #1A1A1A | Contrast elements |
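
If you generate prompts from a script, it may help to keep the palette in one place so the hex codes cannot drift between prompts. The following is a minimal sketch, not an official package: `BRAND_COLORS`, `colorsForPrompt`, and `allHexCodesValid` are hypothetical names, and only the hex values and descriptions come from the table above.

```typescript
// Brand palette copied from the table above; the object and helper names are illustrative.
const BRAND_COLORS = {
  orange: { hex: "#E67B35", use: "Primary brand color" },
  yellowGold: { hex: "#FFC14D", use: "Gradients, sun elements" },
  cream: { hex: "#FAF7F1", use: "Warm backgrounds" },
  oliveGreen: { hex: "#5B6E4D", use: "Hills, nature elements" },
  darkText: { hex: "#1A1A1A", use: "Contrast elements" },
} as const;

type BrandColorName = keyof typeof BRAND_COLORS;

const colorNames = Object.keys(BRAND_COLORS) as BrandColorName[];

// Render the palette as prompt lines, one color per line.
function colorsForPrompt(): string {
  return colorNames
    .map((key) => `- ${key} ${BRAND_COLORS[key].hex} (${BRAND_COLORS[key].use})`)
    .join("\n");
}

// Guard against typos: every entry must be a six-digit hex code.
function allHexCodesValid(): boolean {
  return colorNames.every((key) => /^#[0-9A-F]{6}$/i.test(BRAND_COLORS[key].hex));
}

console.log(colorsForPrompt());
```

The output of `colorsForPrompt()` can be pasted under a `COLORS:` heading in any prompt on this page.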

---

## Full Example: Quote Graphic

Here's a complete, copy-pastable prompt for creating a quote graphic with a person photo:

```

Create a quote graphic for social media.

LAYOUT:

- Landscape format (16:9 or similar)

- Person photo on the LEFT side (I am attaching their photo)

- Quote text on the RIGHT side

- Subtle rolling hills silhouette along the bottom edge

BACKGROUND:

- Warm cream/off-white with subtle paper texture (like watercolor paper or linen)

- Soft, organic grain. NOT flat digital white

- Very faint warm tones, natural lighting feel

BOTTOM ELEMENT:

- Stylized rolling hills silhouette in olive green (#5B6E4D) with gold (#FFC14D) accents

- Hills should be at the BOTTOM of the image, BEHIND/BENEATH any person

- Person should appear in front of the hills (hills are background layer)

- Hills should be subtle, fading into the background. Not dominant

- Soft gradients, layered depth

STYLE:

- Warm, inviting, professional

- Nature-inspired, pastoral vibes (think Windows XP wallpaper but warmer)

- NOT techy, NOT futuristic, NO circuits/grids/nodes

- Soft and organic, not sharp or digital

COLORS:

- Background: Cream #FAF7F1 with paper texture

- Hills: Olive #5B6E4D with gold #FFC14D highlights

- Any accent elements: Orange #E67B35

TEXT (generate this in the image):

- Main quote in a bold, geometric sans-serif (like Helvetica Neue Bold or Arial Bold)

- Quote text color: Dark #1A1A1A

- Key phrase can be highlighted in Orange #E67B35

- Attribution/name in slightly smaller text below the quote

- Use curly quotation marks (“ ”)

TYPOGRAPHY STYLE:

- Clean, professional, modern but warm

- Good letter spacing, readable

- NOT decorative or script fonts for the main quote

LAYOUT for text:

- Quote on the RIGHT 60% of the image

- Person photo on LEFT 40%

[ATTACH:

- paper-background.png (background style reference)

- rolling-hills.png (hills style reference)

- Photo of the person to feature

]

```

---

## Example "WHAT I NEED" Additions

**Start with the Master Prompt above**, attach the style references, then replace `[Describe the specific asset here]` with one of these:

### Social Media Banner

```

A wide banner (16:9) with stylized rolling green hills in the foreground
and a warm sunrise in the background. Space for text overlay in the center.

```

### Event Graphic Background

```

Abstract warm background with subtle rolling hill shapes at the bottom edge.
Sunrise glow from the top. Lots of space for text overlay. Vertical format (9:16).

```

### Icon

```

A simple, minimal icon representing [TOPIC]. Rounded edges, soft, approachable.
Single color (orange #E67B35) on transparent background. 256x256px.

```

### Presentation Slide Background

```

Subtle background for a presentation slide (16:9). Cream base with very faint
olive green hill silhouettes at the bottom edge. Minimal, should not distract from text.

```

### Social Post Square

```

Square image (1:1) for Instagram/LinkedIn. Rolling hills composition with
sunrise glow. Leave center area clear for text overlay.

```
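
If you assemble prompts in code rather than by hand, the substitution described in this section could be scripted roughly as follows. This is a sketch under stated assumptions: `buildPrompt` is a hypothetical helper, and it assumes the Master Prompt ends with a `WHAT I NEED:` line whose bracketed placeholder should be replaced in full.

```typescript
// Hypothetical helper: swaps the trailing "WHAT I NEED" placeholder for a concrete request.
function buildPrompt(masterPrompt: string, request: string): string {
  const marker = "WHAT I NEED:";
  const index = masterPrompt.lastIndexOf(marker);
  if (index === -1) {
    throw new Error(`Master prompt is missing the "${marker}" line`);
  }
  // Keep everything before the marker, then append the marker and the request.
  return `${masterPrompt.slice(0, index)}${marker} ${request.trim()}`;
}

// Shortened stand-in for the Master Prompt; paste the full text in real use.
const masterPromptTail = [
  "AVOID: circuits, grids, nodes, networks, neon",
  "WHAT I NEED: [Describe the specific asset here]",
].join("\n");

const bannerRequest =
  "A wide banner (16:9) with stylized rolling green hills in the foreground " +
  "and a warm sunrise in the background. Space for text overlay in the center.";

const bannerPrompt = buildPrompt(masterPromptTail, bannerRequest);
console.log(bannerPrompt);
```

Style reference images still need to be attached manually in whichever image generator you use.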

---

## Need Help?

If you're creating materials for an official Applied AI Society event or chapter, reach out to the team for guidance or custom assets.

---

# Brand Guidelines

URL: https://docs.appliedaisociety.org/docs/brand

# Brand Guidelines

The Applied AI Society visual identity. Warm, natural, and human.

---

## Philosophy

Our brand is intentionally **not techy**. No circuits, no glowing grids, no dystopian sci-fi aesthetics. When people imagine the future of AI, they often picture something cold and mechanical. We want the opposite.

Think Windows XP's rolling hills. There's a reason that became iconic. Humans are naturally primed to feel good about green pastures and blue skies. It's calming. It feels like home.

The Applied AI Society represents a **natural, positive progression** for humanity. AI isn't something to fear. It's a tool that helps people do meaningful work. Our brand should feel like that: grounded, optimistic, and welcoming.

**Why orange?** It stands out. It's warm without being aggressive. And it's having a moment in fashion and design. It catches the eye without screaming.

---

## Colors

| Color | Hex | Use |
|-------|-----|-----|
| Orange | #E67B35 | Primary brand color, CTAs, links |
| Yellow | #FFC14D | Gradients, accents |
| Cream | #FAF7F1 | Backgrounds (warmer than white) |
| Olive | #5B6E4D | Nature elements, secondary accents |
| Text Dark | #1A1A1A | Headings, body text |

### Background Style

Our backgrounds are **warm cream with subtle paper texture**. Not pure white, not flat. Think watercolor paper or soft linen grain with natural diffused lighting. This gives graphics an organic, inviting feel rather than a cold digital look.

**Download:** [paper-background.png](/img/paper-background.png)

**Logo Gradient:** → (used on "SOCIETY" wordmark)

---

## Typography

| Element | Font | Notes |
|---------|------|-------|
| "APPLIED AI" wordmark | Helvetica Neue Bold | Or Arial Bold |
| "Live" script | Brush script | Custom, hand-drawn feel |
| Headings | Space Grotesk | Technical but friendly |
| Body text | DM Sans | Clean, readable |

```css

/* Google Fonts import */

@import url('https://fonts.googleapis.com/css2?family=DM+Sans:wght@400;500;600&family=Space+Grotesk:wght@600;700&display=swap');

```

---

## Logos

### Main Wordmark

**Download:** [Light SVG](/img/logo-stacked.svg) | [Light PNG](/img/logo-stacked.png) | [Dark SVG](/img/logo-stacked-dark.svg) | [Dark PNG](/img/logo-stacked-dark.png)

### Applied AI Live (Events)

**Download:** [Light SVG](/img/applied-ai-live.svg) | [Dark SVG](/img/applied-ai-live-dark.svg)

---

## Graphic Elements

### Rolling Hills

Stylized green hills representing growth and grounded optimism.

**Download:** [rolling-hills.png](/img/rolling-hills.png)

### Wildflowers

Yellow and orange wildflowers are a great accent in brand illustrations. They echo our primary orange and gold palette and add warmth, life, and natural beauty to landscape scenes. Use them in pastoral compositions alongside rolling hills and sunrises.

*Example: Retro-style flyer with orange and yellow wildflowers in the landscape.*

**Download:** [wildflowers-flyer.png](/img/wildflowers-flyer.png)

### Sun Icon

Warm sun representing energy and new beginnings.

**Download:** [sun.png](/img/sun.png)

#### Animated Sun (React + Framer Motion)

Drop this into your React app for a slowly rotating sun. Requires `framer-motion`.

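```tsx
import { motion } from "framer-motion";

{/* Animated Sun (sketch: image path, size, and offsets are assumptions; adjust to your layout) */}
<motion.img
  src="/img/sun.png"
  alt=""
  className="absolute top-8 right-8 w-32 h-32 mix-blend-multiply"
  animate={{ rotate: 360 }}
  transition={{ duration: 120, repeat: Infinity, ease: "linear" }}
/>
```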

**Notes:**

- Uses Tailwind CSS classes for sizing and positioning

- `mix-blend-multiply` blends nicely with cream backgrounds

- 120-second rotation for subtle, non-distracting movement

- Adjust `top`/`right` values to position in your layout

---

## Favicon

All Applied AI Society web properties use the **sun icon** as their favicon. The sun is one of our core brand motifs representing energy, optimism, and new beginnings. It works well at small sizes and is immediately recognizable.

The favicon uses the brand gradient ( → ) in SVG format for crisp rendering at any size. SVG favicons are supported by all modern browsers.

**Download:** [favicon.svg](/img/favicon.svg)

**Implementation:**

- **Docusaurus sites**: Set `favicon: 'img/favicon.svg'` in `docusaurus.config.ts`

- **Next.js sites**: Place `icon.svg` (or `favicon.svg`) in `app/` or `public/` and reference via metadata

- **Static HTML**: `<link rel="icon" type="image/svg+xml" href="/img/favicon.svg" />`

---

## Usage Rules

### Do

- ✅ Use official assets from this page

- ✅ Maintain clear space around logos

- ✅ Use appropriate color version for background

- ✅ Keep backgrounds warm (cream > pure white)

### Don't

- ❌ Recreate logos from scratch

- ❌ Change logo colors

- ❌ Stretch or distort

- ❌ Add shadows, outlines, or effects

---

## Generate Your Own Assets

Need custom graphics? See [Generating Brand Assets with AI](/docs/brand/ai-generation) for prompts and style references to create on-brand images with Gemini, Midjourney, or other tools.

---

# Gary Sheng

URL: https://docs.appliedaisociety.org/docs/case-studies/gary-sheng-media-automation

# Gary Sheng

*Building AI-Powered Content Tools for Media Companies*

---

Gary Sheng has been quietly building custom AI tools for media clients since early 2025. Not platforms. Not SaaS products. Specific tools that solve specific problems for specific teams, then iterating based on what those teams actually need next.

The pattern is always the same: sit with the client, understand what their team does every day, identify where they're losing time or quality, and build something that fixes it. The tools aren't theoretical. They're in production, used daily by content teams generating tens of millions of impressions.

---

## Client A: A High-Volume Media Company

The first major engagement was with a media company that operates multiple brands and runs a team of content creators posting across platforms at high volume. Their existing workflow had several pain points that were obvious candidates for automation.

### The Meme Generator

The team was creating image-based content (bold text overlaid on images) manually. Each piece required finding or creating an image, formatting text, and exporting. Gary built a custom meme generator that went through multiple iterations with the team before landing on a version they use every day.

"We went through several versions," Gary says. "You can't just ship v1 and walk away. The team has to live with it, tell you what's annoying, what's slow, what doesn't match the brand. Then you iterate until it disappears into their workflow."

### The Video Reformatter

The team had a painful process: find a video on X (Twitter), download it (which is never straightforward), add a caption, and repost it as a Reel on Instagram. Every step had friction. Gary built a mobile-friendly app where anyone on the team can paste an X video URL, add a caption, and get a ready-to-post video out the other end.

"That one was pure workflow automation," Gary says. "No AI magic needed. Just removing every unnecessary step so the team can focus on the editorial judgment of what to post, not the mechanics of posting it."

### The Image Stylizer

This was the tool that changed what the team thought was possible. Gary built an app that takes any reference image and transforms it into a specific visual style that the team battle-tested together. One of their brands uses a courtroom-sketch aesthetic. Every image from Getty or news sources gets stylized into that look. The result is instantly recognizable content with a distinctive brand identity, produced at volume.

"The stylizer wasn't automating an existing process," Gary says. "It was something they weren't doing before because it would have been impossibly expensive and slow. Once they had it, they realized they could create a visual identity that's theirs. Now if you've seen their content a couple times, you recognize it immediately."

The team uses it constantly. It's become foundational to how they create content across multiple brands.

### The Results

The combination of these tools produced the media company dream: better quality, more distinctive brand identity, and higher volume. The team went from spending significant time on production mechanics to spending almost all their time on editorial decisions (what to post, what angle to take, what's worth amplifying).

The content reaches tens of millions of impressions. The tools didn't create the audience. The audience was already there. But the tools made it possible to serve that audience with better, more consistent, more visually distinctive content at a pace that would have required a much larger team.

---

## Client B: A Podcast Content Strategist

The second client is a content strategist working in podcasts. Different industry, different daily workflow, but the same underlying pattern: identify what the person does every day, find the friction, build tools that remove it.

Gary built a suite of tools that make the strategist's work faster and more consistent. The specifics differ from Client A (podcasts have different production needs than social media content), but the approach is identical: start with the existing workflow, automate the tedious parts, then watch as the client's imagination opens up about what else is possible.

"That's the progression every time," Gary says. "First you automate what they already do. Match the quality, save the time. Then naturally they start saying, 'What if it could also do this?' Their imagination opens up because they're no longer buried in production work. They can think about what's next."

---

## How He Works

Gary charges $175/hour and works directly with the client and their team. No handoffs. No requirement documents that get passed to a separate engineering team. He sits in the room (or on the call), understands the problem, and often has a working version within the same session.

"If I was outsourcing this to a software engineer, I'd still have to translate the client's needs into specs, wait for a build, review it, send feedback, wait again," he says. "Instead I'm building it on the spot. The client sees it working in real time. We iterate together. By the end of the session they have something they can use."

This is closer to the forward deployed engineering model that companies like Palantir use: collapse the distance between the person who understands the problem and the person who can build the solution. In Gary's case, they're the same person.

### The Stack

Gary builds primarily with:

- **Claude Code** for rapid development and iteration

- **Remotion** for video and image generation tooling

- **Next.js / React** for web-based tools the team can access on any device

- **Google Gemini** and **OpenAI APIs** for image generation and stylization

- Custom scripts and automation pipelines tailored to each client's workflow

The tools aren't complex. They're specific. Each one does exactly what the client's team needs, nothing more. That specificity is what makes them actually get used instead of gathering dust.

---

## The Pattern

Across both clients, the progression follows the same arc:

1. **Automate existing workflows.** Start by doing what the team already does, just faster and with less friction. This builds trust and saves immediate time.

2. **Match or exceed quality.** The output has to be at least as good as what the team was producing manually. If it's worse, they won't use it.

3. **Open the imagination.** Once the team isn't buried in production work, they start seeing possibilities they couldn't before. New kinds of content. New visual identities. New formats that would have been too expensive to try manually.

4. **Iterate and expand.** The first tool leads to the second. The second leads to the third. Each one compounds the team's capacity and the client's trust.

"Every company is a media company now," Gary says. "The ones that figure out how to produce better content, faster, with a more distinctive voice, are the ones that win. These tools aren't replacing the creative judgment. They're freeing the team to focus entirely on creative judgment."

---

*Gary Sheng is the founder of [Applied AI Society](https://appliedaisociety.org) and an applied AI practitioner specializing in media and content automation. He works directly with media companies and creative professionals to build custom AI tools that increase output quality and volume.*

*Connect with Gary on [X/Twitter](https://x.com/gaborsheng) or [LinkedIn](https://www.linkedin.com/in/garysheng/).*

---

# Case Studies

URL: https://docs.appliedaisociety.org/docs/case-studies

# Case Studies & Practitioner Profiles

Real projects, real practitioners, documented openly. Part of the [reality bank](/docs/philosophy/why-field-notes).

This section features three kinds of content: **project case studies** showing how AI was applied to solve a specific business problem, **practitioner profiles** showing how individuals are building careers in the applied AI economy, and **real life field notes** documenting what applied AI actually looks like in everyday environments (families, travel, education, personal life). All serve the same purpose: giving you a concrete picture of what this work actually looks like, so you can contribute your own field notes to the source material.

---

## Personal Transformations

| Case Study | Who | Summary |
|------------|-----|---------|
| [Tim Dort-Golts](/docs/case-studies/tim-dort-golts-personal-transformation) | Tim Dort-Golts | A non-technical business student rebuilds his entire personal and professional workflow with an AI agent |

---

## Practitioner Profiles

| Profile | Focus |
|---------|-------|
| [Rostam Mahabadi](/docs/case-studies/rostam-mahabadi) | AI agent building and consulting. Radical transparency as a sales strategy. 90-95% close rate. |

---

## Corporate Case Studies

| Case Study | Company | Summary |
|------------|---------|---------|
| [Ramp: Glass](/docs/case-studies/ramp-glass) | Ramp | 700 employees, 350 shared skills, and an internal AI suite that validates harness engineering, shared skill files, and sovereignty at corporate scale |

---

## Project Case Studies

| Case Study | Who | Summary |
|------------|-----|---------|
| [Gary Sheng: Media Automation](/docs/case-studies/gary-sheng-media-automation) | Gary Sheng | Building custom AI content tools for media companies: meme generators, video reformatters, and image stylizers driving tens of millions of impressions |

---

## What Applied AI Actually Looks Like in Real Life

*Coming soon.* Field notes from practitioners documenting how applied AI shows up in real-world environments beyond the office: families, travel, education, personal projects, community work. These are not polished case studies. They are honest observations from people living with these tools every day, contributed to the [source material](/docs/applied-ai-literacy/earthshot) so that others can learn from real experience.

Want to contribute? [Get in touch](/docs/contact).

---

## Submit Your Story

Are you doing applied AI work? We want to document it. Whether it's a project case study, your practitioner journey, or a real-life field note, reach out on [Discord](https://discord.gg/K7uWJBMFaN) or [GitHub](https://github.com/applied-ai-society).

---

# Ramp: Glass

URL: https://docs.appliedaisociety.org/docs/case-studies/ramp-glass

# Ramp: Glass

*700 employees. 350 shared skills. 6,300% usage growth. 1,500 apps shipped in six weeks. And they're just getting started.*

---

## The Story

Ramp is a financial payments infrastructure company. In January 2025, they told their entire company they would become the most productive company in the world. They had no plan for how to get there. What they had was a culture of velocity, a bias toward building, and leadership that treated AI adoption as an expectation rather than an experiment.

Eighteen months later, the numbers speak for themselves: AI usage up 6,300% year over year. 99.5% of the team active on AI tools. 84% using coding agents weekly. Non-engineers now account for 12% of all human-initiated pull requests on the production codebase (thousands per month). 1,500+ apps shipped on their internal platform in six weeks, from 800+ different builders.

They did this without a formal change management program. Without a mandatory training curriculum. Without a master plan. They built infrastructure, raised expectations, removed constraints, and watched it compound.

---

## The Problem

Ramp hit 99% adoption of AI tools across the company early on. On paper, mission accomplished. In practice, most people were stuck.

The models were not the bottleneck. The people were not the bottleneck. The [harness](/docs/concepts/harness-engineering) was the bottleneck. Terminal windows, npm installs, MCP configurations, environment setup: all of it was too much for most employees to configure on their own. The few who pushed through had wildly different setups with no way to share what they had learned.

Ramp had created urgency without providing infrastructure. The result: AI's true upside was limited to the people who already knew how to configure it. Everyone else was driving a Ferrari with the handbrake on.

---

## Glass: The Internal AI Suite

So they built Glass, their own AI productivity suite built on Anthropic's Claude Agent SDK. A team of four built it in under three months. 700 daily active users within a month of launch.

### Everything Connects on Day One

Glass comes auto-configured on install. Employees sign in once via SSO and 30+ tools light up: Salesforce, Snowflake, Gong, Slack, Notion, Google Workspace, Figma, plus Ramp's own internal products. No setup guide. No tickets to IT. If the user has to debug, they have already lost.

This is [minimum viable infrastructure](/docs/concepts/minimum-viable-infrastructure) done right. When a sales rep asks Glass to pull context from a Gong call, enrich it with Salesforce data, and draft a follow-up, it works because everything is already connected.

### Shared Skills Through the Dojo

The biggest innovation is their skill marketplace, called the Dojo. Skills are markdown files (exactly the [instruction files](/docs/concepts/instruction-files) pattern) that teach an agent how to perform a specific task.

When someone on the sales team figures out the best way to analyze Gong calls, break down competitive mentions, and draft battlecards, they package it as a skill and give that superpower to every rep. A CX engineer builds a Zendesk investigation workflow that pulls ticket history, checks account health, and suggests resolution paths. Through the Dojo, the entire support team levels up overnight.

Over 350 skills have been shared company-wide. They are Git-backed, versioned, and reviewed like code. The marketplace is the flywheel: every skill shared [raises the floor](/docs/concepts/raise-the-floor) for everyone.

The Dojo includes a built-in AI guide called the Sensei that looks at which tools you have connected, what role you are in, and what you have been working on, then recommends the skills most likely to be useful. A new hire does not browse a catalog of 350 skills. The Sensei surfaces the five that matter most on day one.
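
To make the recommendation idea concrete, here is a minimal sketch of ranking skills by connected tools, role, and recent work. It is not Ramp's implementation, which is not documented here: the `Skill` and `UserContext` shapes, the scoring weights, and the sample skills are all assumptions made for illustration.

```typescript
// Hypothetical data shapes; a real skill would be a Git-backed markdown file with metadata.
interface Skill {
  name: string;
  requiredTools: string[];
  roles: string[];
  topics: string[];
}

interface UserContext {
  connectedTools: string[];
  role: string;
  recentTopics: string[];
}

// Score 0 means "not usable"; the weights below are arbitrary illustration values.
function scoreSkill(skill: Skill, user: UserContext): number {
  const usable = skill.requiredTools.every((tool) => user.connectedTools.indexOf(tool) !== -1);
  if (!usable) return 0;
  const roleMatch = skill.roles.indexOf(user.role) !== -1 ? 2 : 0;
  const topicOverlap = skill.topics.filter((t) => user.recentTopics.indexOf(t) !== -1).length;
  return 1 + roleMatch + topicOverlap;
}

// Return the highest-scoring usable skills, at most `limit` of them.
function recommendSkills(skills: Skill[], user: UserContext, limit = 5): Skill[] {
  return skills
    .map((skill) => ({ skill, score: scoreSkill(skill, user) }))
    .filter((entry) => entry.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((entry) => entry.skill);
}

const exampleSkills: Skill[] = [
  { name: "zendesk-investigation", requiredTools: ["Zendesk"], roles: ["support"], topics: ["tickets"] },
  { name: "account-research", requiredTools: ["Salesforce"], roles: ["sales"], topics: ["renewals"] },
  { name: "gong-call-analysis", requiredTools: ["Gong", "Salesforce"], roles: ["sales"], topics: ["competitive mentions"] },
];

const exampleUser: UserContext = {
  connectedTools: ["Gong", "Salesforce", "Slack"],
  role: "sales",
  recentTopics: ["competitive mentions"],
};

const recommended = recommendSkills(exampleSkills, exampleUser);
console.log(recommended.map((skill) => skill.name));
```

In this toy data, the Zendesk skill is filtered out because the user has not connected Zendesk, and the Gong skill ranks first because it matches both the role and a recent topic.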

### Persistent Memory

When users first open Glass, the system builds a full memory layer based on their authenticated connections. Every chat session has context on the people they work with, their active projects, relevant Slack channels, Notion documents, and Linear tickets.

A synthesis pipeline runs every 24 hours, mining previous sessions and connected tools for updates. Glass adapts to the user's world without them re-explaining things every session. This is [context engineering](/docs/concepts/context-engineering) and [compounding docs](/docs/concepts/compounding-docs) at organizational scale.

### Always-On Automation

Glass turns your laptop into a server. Schedule automations that run daily, weekly, or on a custom cron schedule, and post results directly to Slack. A finance team lead pulls yesterday's spend anomalies every morning at 8am and posts a summary to the team channel.
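As a rough illustration of the 8am example, the sketch below computes the next daily run time and formats a summary message. It uses only the Python standard library; the function names and the sample data are hypothetical, and the Slack delivery step is deliberately left out because the source does not describe how Glass posts messages.

```python
from datetime import datetime, time, timedelta

# In standard cron syntax, "every day at 08:00" is written: 0 8 * * *


def next_daily_run(now: datetime, at: time) -> datetime:
    """Return the next occurrence of `at` strictly after `now`."""
    candidate = datetime.combine(now.date(), at)
    if candidate <= now:
        candidate += timedelta(days=1)
    return candidate


def format_summary(anomalies: list[tuple[str, float]]) -> str:
    """Render (vendor, amount) pairs as a short plain-text message."""
    if not anomalies:
        return "No spend anomalies yesterday."
    lines = [f"- {vendor}: ${amount:,.2f}" for vendor, amount in anomalies]
    return "Spend anomalies from yesterday:\n" + "\n".join(lines)


# Hypothetical usage: it is 09:30, so the next 8am run is tomorrow.
run_at = next_daily_run(datetime(2026, 4, 1, 9, 30), time(8, 0))
message = format_summary([("Acme", 1234.5)])
```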

You can create Slack-native assistants that listen and respond in channels using your full Glass setup: integrations, memory, and skills included. For long-running tasks, Glass has a headless mode: kick off a task, walk away, approve permission requests from your phone. This is the [always-on agents](/docs/concepts/always-on-agents) pattern in production.

### Workspace, Not Chat Window

Most AI products give you a single conversation thread. Glass gives you a full workspace built around split panes. Tile multiple chat sessions side by side, and open documents, data files, and code alongside your conversations. The layout persists across sessions. This is [flow-state infra](/docs/concepts/flow-state-infra): the product is designed around how real work actually happens.

---

## The Playbook: How They Got the Whole Company Building

Glass was the infrastructure. But the cultural transformation required more than a tool. Ramp's CPO Geoff Charles documented the full playbook. Here is what they learned.

### The Proficiency Ladder

Ramp thinks about AI proficiency in four levels:

| Level | Description | What it looks like |
|-------|-------------|--------------------|
| L0 | Sometimes uses ChatGPT. Has not changed any workflows. | If you are here and not self-starting, you will most likely not be at the company. |
| L1 | Built custom GPTs, used Notion agents, dabbled in Claude Code. | Starting to see what is possible but has not compounded it yet. |
| L2 | Built an app that automates part of their job. Committed code or contributed feedback. | This is where things get real. |
| L3 | Systems builders. They build the infrastructure that levels up everyone else. | Force multipliers. |

This maps closely to the [Four Levels of Applied AI for Existing Businesses](/docs/concepts/four-levels-of-applied-ai-for-existing-businesses). Ramp's job is to get everyone up the ladder. Three things make that possible:

1. **Build tools that meet people where they are.** They shifted the whole company to Claude and Notion AI connected to workplace tools. Low technical bar, immediate benefit. That moves L0 to L1.

2. **Raise expectations as tools mature.** AI proficiency moved into hiring screens, onboarding, and performance conversations. That pushes L1s to L2.

3. **Match the mandate to the tooling.** If you raise expectations before the tools can deliver, you burn credibility and people stop listening.

### The Hackathon

Ramp hosted the largest AI hackathon ever: 700 participants across sales, CX, legal, marketing, and finance, coached by 100 of their most capable engineering and product teammates. They shipped more in a week than Ramp previously could in a year. This was [the encounter](/docs/concepts/the-encounter) at massive scale: hundreds of people experiencing what AI can do firsthand, all at once.

### Embrace Creative Destruction

Many of the tools Ramp shipped in January 2026 were already obsolete by April, replaced by better versions, often from the same builders. They got comfortable with a shelf life of weeks, not months.

Their data democratization journey tells the story:

- **Phase 1:** Notion AI was the best option, so they piped data into Notion databases.

- **Phase 2:** They launched Ramp Research, a Slack-based Snowflake research tool.

- **Phase 3:** As coding agents matured, they encoded Snowflake research into skills those agents could use directly.

- **Phase 4:** Now they are making data research interactive and self-improving.

Each generation opened doors the previous one could not. Each previous generation was quietly sunset. People are not attached to their tools. They are attached to their problems. When a better way to solve the problem shows up, they grab it.

### Build from the Center, Drive from the Spokes

Ramp got the org design wrong before they got it right.

First instinct: centralize. One small team builds tools for the whole company. Demand outstripped capacity immediately. Then they swung decentralized: every team builds their own things. Tons of redundant re-learning.

The answer was both:

- **A small central team** builds the platforms, connectors, and plumbing across LLMs, data, knowledge, and workflows. They also manage training, enablement, and change management.

- **Functional teams** build on top of those platforms and give feedback that drives the central team's roadmap.

The results from non-engineers:

- A risk analyst automated 16 hours per month of manual financial modeling.

- A sales ops lead replaced a spreadsheet-based comp model across three orgs in 48 hours.

- An L&D lead built a training simulator in 15 minutes.

- Someone in finance built a contract reviewer that saves 45 minutes per contract.

None of them filed a ticket. They found their own pain, prototyped a fix, and pulled engineering in when it was time to go to production (when that was even necessary).

### Make It a Competition

Ramp built an internal leaderboard tracking AI usage across every team and individual. Sessions run, skills used, apps shipped, tools connected. Visible to everyone.

The leaderboard created three dynamics:

**Healthy peer pressure.** Nobody wants to be at the bottom. When you can see that your peer on another team is running 3x more sessions and shipping tools that save their team hours, you do not need a mandate to start building.

**Manager accountability.** Team-level rankings made it impossible for managers to ignore AI adoption. If your team is in the bottom quartile, that is a conversation you are going to have.

**Discovery through emulation.** The leaderboard is not just a scoreboard. It is a map. When you see someone at the top, you want to know what they are doing. You look at their skills, their workflows, their apps.
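A small sketch of the team-level view, assuming usage is logged as `(team, person, sessions_run)` records. The record shape, the team names, and the choice to rank by raw session totals are assumptions for illustration; the source lists several tracked metrics but does not say how Ramp combines them.

```python
from collections import defaultdict


def team_leaderboard(records):
    """Sum sessions per team and return (team, total) pairs, highest first."""
    totals = defaultdict(int)
    for team, _person, sessions_run in records:
        totals[team] += sessions_run
    # Ties are broken alphabetically so the ordering is deterministic.
    return sorted(totals.items(), key=lambda kv: (-kv[1], kv[0]))


def bottom_quartile(ranked):
    """Return the team names in the lowest quarter of the ranking."""
    if not ranked:
        return []
    cutoff = max(1, len(ranked) // 4)
    return [team for team, _total in ranked[-cutoff:]]


# Hypothetical usage data.
records = [
    ("sales", "ana", 70), ("sales", "ben", 50),
    ("cx", "chi", 90),
    ("finance", "dee", 40),
    ("legal", "eli", 10),
]
ranked = team_leaderboard(records)
lagging = bottom_quartile(ranked)
```

Ranking by raw totals favors large teams; a per-person average would be a reasonable alternative if team sizes differ widely.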

### Remove Every Constraint

The number one way companies kill AI adoption is by treating it like a procurement decision. Budget approvals. IT reviews. Token limits. Connector requests sitting in a queue. Every one of these is a wall between your people and their breakthrough moment.

Ramp took the opposite approach:

**Infinite learning budget.** If you demand ROI on every token before people have even learned to use the tools, you will never get adoption. The payoff comes from the compounding, not from day one.

**No token limits or access restrictions.** No caps on usage. No tiered access based on role. Everyone gets the same tools, the same models, the same access. The people who surprised them most were the ones they would have never given access to under a traditional approval process.

**Pre-connected integrations.** An AI agent is only as useful as what it can access. If people have to file a ticket and wait two weeks for IT to approve a Salesforce connection, they lose momentum and never come back. 30+ tools connected on day one.

The cost math that should reframe the conversation for any CFO: token consumption per employee today is nowhere near a double-digit percentage of their salary. But if someone is 2x more productive with AI, you should be willing to spend their entire salary again in tokens. If you have agents that can do 10x more work than a person, why would you not pay them twice as much as that person?
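The arithmetic behind that argument can be made explicit. The sketch below assumes the value of an employee's output is roughly proportional to their salary, so the extra output from a productivity multiplier is worth `salary * (multiplier - 1)`. The salary and token-spend figures are hypothetical placeholders, not Ramp's numbers.

```python
def break_even_token_budget(salary: float, productivity_multiplier: float) -> float:
    """Token spend at which the extra output just pays for itself.

    Assumes output value scales linearly with salary (a simplification).
    """
    return salary * (productivity_multiplier - 1)


def token_share_of_salary(token_spend: float, salary: float) -> float:
    """Token spend expressed as a fraction of salary."""
    return token_spend / salary


# Hypothetical figures for illustration only.
salary = 150_000.0
annual_token_spend = 6_000.0

budget_at_2x = break_even_token_budget(salary, 2.0)          # one full salary
share = token_share_of_salary(annual_token_spend, salary)    # 4% of salary
headroom = budget_at_2x - annual_token_spend
```

Under these assumptions, a 2x multiplier justifies up to one additional salary in token spend, which is the claim made above; actual spend at a single-digit percentage of salary leaves most of that budget unused.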

### AI Proficiency as a Hiring Requirement

Ramp now has an absolute requirement for anyone joining the company to be proficient with AI tools. No exceptions. For PM candidates, there is a dedicated interview session: build me a product, show me how you built it, walk me through how it works. It is a full prototype, not a slide deck. If you cannot demonstrate that you have internalized these tools, you do not clear the bar.

AI proficiency also moved into performance management. It is not optional. It is how Ramp evaluates whether people and teams are operating at their potential.

---

## Why They Built Instead of Bought

Ramp's reasoning for building in-house maps directly to the [sovereignty](/docs/concepts/the-sovereignty-stack) argument:

**Internal productivity is a moat.** The companies that make every employee effective with AI will move faster, serve customers better, and compound advantages their competitors cannot match. You do not hand your moat to a vendor.

**Speed.** When you own the tool, you see exactly where people get stuck and ship fixes the same day. Every session generates signal about how non-engineers actually learn to use AI: which skills get adopted, where people break through, what separates someone who uses it once a week from someone who uses it every day.

**It informs the external product.** Many of the problems Ramp solves for internal users translate directly to customers. Solving these problems internally gives them conviction about what works before they ship it.

---

## What This Validates

The Ramp story validates, at corporate scale, nearly every core AAS concept:

| AAS Concept | Ramp Implementation |
|-------------|---------------------|
| [Harness Engineering](/docs/concepts/harness-engineering) | "The models are good enough, the harness isn't." The headline of the entire project. |
| [Instruction Files](/docs/concepts/instruction-files) | 350+ markdown skill files, Git-backed and versioned. |
| [Raise the Floor](/docs/concepts/raise-the-floor) | The Dojo skill marketplace. One person's breakthrough becomes everyone's baseline. |
| [Context Engineering](/docs/concepts/context-engineering) | Auto-built memory from authenticated connections. |
| [Always-On Agents](/docs/concepts/always-on-agents) | Scheduled automations, Slack assistants, headless mode. |
| [Flow-State Infra](/docs/concepts/flow-state-infra) | Workspace with split panes, persistent layout, inline rendering. |
| [The Sovereignty Stack](/docs/concepts/the-sovereignty-stack) | Built in-house because internal AI infra is a competitive moat. |
| [Self-Improving Enterprise](/docs/concepts/self-improving-enterprise) | The Dojo flywheel plus creative destruction: tools improve weekly. |
| [The Encounter](/docs/concepts/the-encounter) | "The product is the enablement." 700-person hackathon as mass encounter. |
| [Four Levels](/docs/concepts/four-levels-of-applied-ai-for-existing-businesses) | L0-L3 proficiency ladder mirrors the AAS levels framework. |
| [See Your Own Thinking](/docs/concepts/see-your-own-thinking) | Memory system gives every employee a thinking partner with full context from day one. |
| [Your Two Futures](/docs/philosophy/your-two-futures) | Ramp chose Future A. The results make the alternative unthinkable. |

---

## The Playbook for Every Company

You do not need to be Ramp. You do not need a team of four engineers or a 700-person hackathon. But you need to understand that this is the new bar. The companies that suit up their entire workforce this way will compound advantages that companies still debating their "AI strategy" cannot match.

Here is what any company can take from Ramp's approach:

**1. Start today, not with a plan.** Ramp did not have a master plan. They had a culture that rewards speed and a leadership team that said: AI usage is an expectation, not an experiment. That clarity alone moves organizations further than any strategy deck.

**2. Build the infrastructure that removes friction.** Pre-connect your tools. Eliminate token limits. Kill the IT approval queues that stand between your people and their breakthrough moment. If your employees have to debug a setup before they can use AI, you have already lost.